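This assignment took me a long time overall. The main reason is that I could not concentrate on writing the code; the second is that there was a lot of basic knowledge I had to look up. So I am recording the usage here for when I need it, and I will write a short summary each time.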

Some points where I went wrong earlier:

s["dW" + str(l+1)] = beta2 * s["dW" + str(l+1)] + (1-beta2) * np.square(grads["dW" + str(l+1)])

# Wrong: math.pow only accepts scalars, so it fails on a gradient array.

# s["db" + str(l+1)] = beta2 * s["db" + str(l+1)] + (1-beta2) * math.pow(grads["db" + str(l+1)], 2)

v_corrected["dW" + str(l+1)] = v["dW" + str(l+1)] / (1 - np.power(beta1, t))

# Wrong: the exponent must be the Adam step counter t, not the layer index l.

# v_corrected["db" + str(l+1)] = v["db" + str(l+1)] / (1 - math.pow(beta1, l))

The initialization can be written either of the following ways; np.zeros_like is a function I newly learned:

s["dW" + str(l+1)] = np.zeros((parameters["W" + str(l+1)].shape[0], parameters["W" + str(l+1)].shape[1]))

s["db" + str(l+1)] = np.zeros_like(parameters["b" + str(l+1)])
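To check these points for myself, here is a small sketch. The arrays `grad` and `W` are made-up stand-ins for `grads["dW1"]` and `parameters["W1"]`, not values from the assignment. It shows that `np.square` works element-wise, that `math.pow` raises a `TypeError` on a multi-element array, and that both initializations give the same zero array.

```python
import math
import numpy as np

# Hypothetical stand-in for grads["dW1"].
grad = np.array([[1.0, -2.0], [3.0, 0.5]])

# np.square is applied element-wise to the whole array.
squared = np.square(grad)

# math.pow accepts only scalars; a multi-element array cannot be
# converted to a float, so the call raises TypeError.
try:
    math.pow(grad, 2)
    math_pow_failed = False
except TypeError:
    math_pow_failed = True

# Hypothetical stand-in for parameters["W1"].
W = np.ones((3, 2))

# Two equivalent ways to create a zero array with the shape of W.
s_explicit = np.zeros((W.shape[0], W.shape[1]))
s_like = np.zeros_like(W)

print(math_pow_failed, s_explicit.shape, s_like.shape)
```

`np.zeros_like` also copies the dtype of its argument, which is convenient when the parameters are not float64.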

Usage and differences of ravel(), flatten(), and squeeze() in NumPy: https://blog.csdn.net/tymatlab/article/details/79009618

See the official documentation:

- ravel()

- flatten()

- squeeze()
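A short sketch of how the three functions differ, using a made-up label array `Y` shaped like `train_Y` (1 row, several columns). The values are only for illustration.

```python
import numpy as np

# Hypothetical label array with shape (1, 4), like train_Y.
Y = np.array([[1, 0, 1, 1]])

flat_copy = Y.flatten()   # always returns a copy, shape (4,)
flat_view = Y.ravel()     # returns a view when possible, shape (4,)
squeezed = Y.squeeze()    # removes axes of length 1, shape (4,)

# Writing to the copy leaves Y unchanged.
flat_copy[0] = 99

# Writing to the view changes Y, because ravel returned a view here.
flat_view[1] = 77

# squeeze only drops length-1 axes; other axes are kept.
cube = np.zeros((2, 1, 3))
cube_squeezed = cube.squeeze()

print(Y, flat_copy.shape, flat_view.shape, squeezed.shape, cube_squeezed.shape)
```

So `flatten()` is the safe choice when the result will be modified, and `ravel()` avoids a copy when it will only be read.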

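Learning to use the NumPy functions np.c_ and np.r_: https://blog.csdn.net/Together_CZ/article/details/79548217

- np.r_ concatenates two matrices along the first axis, i.e. it stacks them vertically; the column counts must match. It is similar to concat() in pandas.

- np.c_ concatenates two matrices along the second axis, i.e. it places them side by side; the row counts must match. It is loosely similar to merge() in pandas.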
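A quick sketch of both helpers on two made-up 2x3 matrices, plus the 1-D case, where `np.c_` turns each vector into a column.

```python
import numpy as np

a = np.array([[1, 2, 3], [4, 5, 6]])
b = np.array([[7, 8, 9], [10, 11, 12]])

# np.r_ stacks along the first axis: (2, 3) and (2, 3) -> (4, 3).
stacked_rows = np.r_[a, b]

# np.c_ stacks along the second axis: (2, 3) and (2, 3) -> (2, 6).
stacked_cols = np.c_[a, b]

x = np.array([1, 2, 3])
y = np.array([4, 5, 6])

# With 1-D inputs, np.r_ gives one longer vector of shape (6,).
joined = np.r_[x, y]

# With 1-D inputs, np.c_ treats each vector as a column: shape (3, 2).
paired = np.c_[x, y]

print(stacked_rows.shape, stacked_cols.shape, joined.shape, paired.shape)
```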

5 - Model with different optimization algorithms

Let's use the following "moons" dataset to test the different optimization methods. (The dataset is named "moons" because the data from each of the two classes looks a bit like a crescent-shaped moon.)

train_X, train_Y = load_dataset()

print(train_X.shape[0],train_X.shape[1])

print(train_X[:,0])

print(train_X[0,:])

print(train_Y.shape[0],train_Y.shape[1])

print(train_Y)

print(train_Y.flatten())

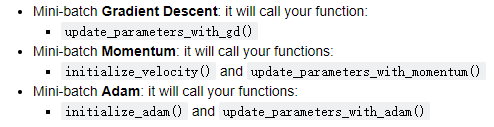

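We have already implemented a 3-layer neural network. You will train it with mini-batch gradient descent, momentum, and Adam, selected through the optimizer argument of model():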

def model(X, Y, layers_dims, optimizer, learning_rate = 0.0007, mini_batch_size = 64, beta = 0.9,
          beta1 = 0.9, beta2 = 0.999, epsilon = 1e-8, num_epochs = 10000, print_cost = True):
    """
    3-layer neural network model which can be run in different optimizer modes.

    Arguments:
    X -- input data, of shape (2, number of examples)
    Y -- true "label" vector (1 for blue dot / 0 for red dot), of shape (1, number of examples)
    layers_dims -- python list, containing the size of each layer
    learning_rate -- the learning rate, scalar.
    mini_batch_size -- the size of a mini batch
    beta -- Momentum hyperparameter
    beta1 -- Exponential decay hyperparameter for the past gradients estimates
    beta2 -- Exponential decay hyperparameter for the past squared gradients estimates
    epsilon -- hyperparameter preventing division by zero in Adam updates
    num_epochs -- number of epochs
    print_cost -- True to print the cost every 1000 epochs

    Returns:
    parameters -- python dictionary containing your updated parameters
    """

    L = len(layers_dims)             # number of layers in the neural networks
    costs = []                       # to keep track of the cost
    t = 0                            # initializing the counter required for Adam update
    seed = 10                        # For grading purposes, so that your "random" minibatches are the same as ours

    # Initialize parameters
    parameters = initialize_parameters(layers_dims)

    # Initialize the optimizer
    if optimizer == "gd":
        pass # no initialization required for gradient descent
    elif optimizer == "momentum":
        v = initialize_velocity(parameters)
    elif optimizer == "adam":
        v, s = initialize_adam(parameters)

    # Optimization loop
    for i in range(num_epochs):

        # Define the random minibatches. We increment the seed to reshuffle differently the dataset after each epoch
        seed = seed + 1
        minibatches = random_mini_batches(X, Y, mini_batch_size, seed)

        for minibatch in minibatches:

            # Select a minibatch
            (minibatch_X, minibatch_Y) = minibatch

            # Forward propagation
            a3, caches = forward_propagation(minibatch_X, parameters)

            # Compute cost
            cost = compute_cost(a3, minibatch_Y)

            # Backward propagation
            grads = backward_propagation(minibatch_X, minibatch_Y, caches)

            # Update parameters
            if optimizer == "gd":
                parameters = update_parameters_with_gd(parameters, grads, learning_rate)
            elif optimizer == "momentum":
                parameters, v = update_parameters_with_momentum(parameters, grads, v, beta, learning_rate)
            elif optimizer == "adam":
                t = t + 1 # Adam counter
                parameters, v, s = update_parameters_with_adam(parameters, grads, v, s,
                                                               t, learning_rate, beta1, beta2, epsilon)

        # Print the cost every 1000 epoch
        if print_cost and i % 1000 == 0:
            print ("Cost after epoch %i: %f" %(i, cost))
        if print_cost and i % 100 == 0:
            costs.append(cost)

    # plot the cost
    plt.plot(costs)
    plt.ylabel('cost')
    plt.xlabel('epochs (per 100)')
    plt.title("Learning rate = " + str(learning_rate))
    plt.show()

    return parameters

You will now run this 3-layer neural network with each of the 3 optimization methods.
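The helper `random_mini_batches` is called in `model` but not shown in this post. As a rough sketch of what such a helper does, here is a version under my own assumptions: the name `random_mini_batches_sketch` is hypothetical, and it shuffles with `np.random.default_rng(seed)`, which may differ from the course helper. It shuffles the columns of X and Y with the same permutation and then cuts them into consecutive batches.

```python
import numpy as np

def random_mini_batches_sketch(X, Y, mini_batch_size=64, seed=0):
    """Shuffle the examples (columns) and split them into mini-batches.

    Hypothetical sketch; X has shape (n_x, m), Y has shape (1, m).
    """
    rng = np.random.default_rng(seed)
    m = X.shape[1]

    # Use one permutation for both arrays so each label stays with its example.
    permutation = rng.permutation(m)
    shuffled_X = X[:, permutation]
    shuffled_Y = Y[:, permutation]

    mini_batches = []
    for start in range(0, m, mini_batch_size):
        end = start + mini_batch_size  # the last batch may be smaller
        mini_batches.append((shuffled_X[:, start:end], shuffled_Y[:, start:end]))
    return mini_batches

# Made-up data: 150 examples, labels tied to the first feature for checking.
X_demo = np.arange(300, dtype=float).reshape(2, 150)
Y_demo = X_demo[0:1, :] * 2
batches = random_mini_batches_sketch(X_demo, Y_demo, mini_batch_size=64, seed=11)
batch_sizes = [bx.shape[1] for bx, _ in batches]
print(batch_sizes)
```

Changing the seed every epoch, as `model` does with `seed = seed + 1`, gives a different shuffle each time while keeping runs reproducible.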

5.1 - Mini-batch Gradient descent

Run the following code to see how the model does with mini-batch gradient descent.

# train 3-layer model

layers_dims = [train_X.shape[0], 5, 2, 1]

parameters = model(train_X, train_Y, layers_dims, optimizer = "gd")

# Predict

predictions = predict(train_X, train_Y, parameters)

print(predictions)

# Plot decision boundary

plt.title("Model with Gradient Descent optimization")

axes = plt.gca() #gca=get current axis

axes.set_xlim([-1.5,2.5])

axes.set_ylim([-1,1.5])

# print(x.T)

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y.flatten())
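For reference, the update that the "gd" mode applies to each mini-batch can be sketched as follows. This is my own minimal version under the assumption that parameters and gradients are stored as "W1", "b1", ... and "dW1", "db1", ...; the name `update_parameters_with_gd_sketch` is hypothetical and is not the course implementation.

```python
import numpy as np

def update_parameters_with_gd_sketch(parameters, grads, learning_rate):
    """One gradient descent step: W = W - learning_rate * dW, same for b."""
    L = len(parameters) // 2  # each layer contributes one W and one b
    for l in range(1, L + 1):
        parameters["W" + str(l)] = parameters["W" + str(l)] - learning_rate * grads["dW" + str(l)]
        parameters["b" + str(l)] = parameters["b" + str(l)] - learning_rate * grads["db" + str(l)]
    return parameters

# Made-up one-layer example.
params_demo = {"W1": np.array([[1.0, 2.0]]), "b1": np.array([[0.0]])}
grads_demo = {"dW1": np.array([[0.5, -0.5]]), "db1": np.array([[1.0]])}
params_demo = update_parameters_with_gd_sketch(params_demo, grads_demo, learning_rate=0.1)
print(params_demo["W1"], params_demo["b1"])
```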

5.2 - Mini-batch gradient descent with momentum

Run the following code to see how the model does with momentum. Because this example is relatively simple, the gains from using momentum are small, but for more complex problems you might see bigger gains.

# train 3-layer model

layers_dims = [train_X.shape[0], 5, 2, 1]

parameters = model(train_X, train_Y, layers_dims, beta = 0.9, optimizer = "momentum")

# Predict

predictions = predict(train_X, train_Y, parameters)

# Plot decision boundary

plt.title("Model with Momentum optimization")

axes = plt.gca()

axes.set_xlim([-1.5,2.5])

axes.set_ylim([-1,1.5])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y.flatten())
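The momentum update keeps an exponentially weighted average of past gradients (the velocity) and steps along it instead of along the raw gradient. Below is a minimal single-array sketch; the function name `momentum_step` and its signature are my own assumptions, not the course's `update_parameters_with_momentum`.

```python
import numpy as np

def momentum_step(w, grad, v, beta=0.9, learning_rate=0.0007):
    """One momentum update for a single parameter array (hypothetical sketch)."""
    # Velocity: exponentially weighted average of the gradients.
    v = beta * v + (1 - beta) * grad
    # Step along the velocity.
    w = w - learning_rate * v
    return w, v

# Made-up values: start from zero velocity with a constant gradient of 1.
w_demo = np.array([1.0])
v_demo = np.zeros(1)
w_demo, v_demo = momentum_step(w_demo, np.array([1.0]), v_demo, beta=0.9, learning_rate=0.1)
print(w_demo, v_demo)
```

With beta = 0.9 the first step is ten times smaller than a plain gradient step, because the velocity starts at zero and builds up over several iterations.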

5.3 - Mini-batch with Adam mode

Run the following code to see how the model does with Adam.

# train 3-layer model

layers_dims = [train_X.shape[0], 5, 2, 1]

parameters = model(train_X, train_Y, layers_dims, optimizer = "adam")

# Predict

predictions = predict(train_X, train_Y, parameters)

# Plot decision boundary

plt.title("Model with Adam optimization")

axes = plt.gca()

axes.set_xlim([-1.5,2.5])

axes.set_ylim([-1,1.5])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y.flatten())

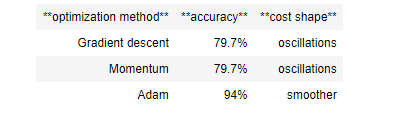
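To tie this back to the notes at the top of the post, here is a minimal sketch of one Adam step for a single parameter array. The function name `adam_step` and its signature are my own assumptions, not the course's `update_parameters_with_adam`. Note that the bias correction uses the step counter t as the exponent, and the squared gradient is computed with `np.square`.

```python
import numpy as np

def adam_step(w, grad, v, s, t, learning_rate=0.0007,
              beta1=0.9, beta2=0.999, epsilon=1e-8):
    """One Adam update for a single parameter array (hypothetical sketch)."""
    # Moving averages of the gradient and of the squared gradient.
    v = beta1 * v + (1 - beta1) * grad
    s = beta2 * s + (1 - beta2) * np.square(grad)

    # Bias correction: the exponent is the step counter t.
    v_corrected = v / (1 - np.power(beta1, t))
    s_corrected = s / (1 - np.power(beta2, t))

    # Parameter update; epsilon prevents division by zero.
    w = w - learning_rate * v_corrected / (np.sqrt(s_corrected) + epsilon)
    return w, v, s

# Made-up values for the first step (t = 1).
w_demo = np.array([1.0, -1.0])
g_demo = np.array([0.5, -2.0])
w_demo, v_demo, s_demo = adam_step(w_demo, g_demo, np.zeros(2), np.zeros(2),
                                   t=1, learning_rate=0.01)
print(w_demo)
```

On the first step the corrected averages equal the gradient and its square, so each parameter moves by roughly the learning rate in the direction opposite to its gradient, regardless of the gradient's size.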

5.4 - Summary

Momentum usually helps, but given the small learning rate and the simplistic dataset, its impact is almost negligible. Also, the huge oscillations you see in the cost come from the fact that some minibatches are more difficult than others for the optimization algorithm.

Adam, on the other hand, clearly outperforms mini-batch gradient descent and Momentum. If you run the model for more epochs on this simple dataset, all three methods will lead to very good results. However, you've seen that Adam converges a lot faster.

Some advantages of Adam include:

- Relatively low memory requirements (though higher than gradient descent and gradient descent with momentum)

- Usually works well even with little tuning of hyperparameters (except the learning rate)
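The memory point can be made concrete with a small count. The sketch below is my own illustration (the function name `extra_state_floats` is hypothetical): gradient descent stores no extra state, momentum stores one velocity value per parameter, and Adam stores two values per parameter (v and s).

```python
def extra_state_floats(layers_dims, optimizer):
    """Count the optimizer state values kept in addition to the parameters."""
    # Each layer l has a weight matrix (n_l x n_{l-1}) and a bias vector (n_l).
    n_params = sum(layers_dims[l] * layers_dims[l - 1] + layers_dims[l]
                   for l in range(1, len(layers_dims)))
    # Number of parameter-sized state copies each optimizer keeps.
    copies = {"gd": 0, "momentum": 1, "adam": 2}[optimizer]
    return copies * n_params

# The network used in this post: 2 inputs, hidden layers of 5 and 2, 1 output.
layers_dims_demo = [2, 5, 2, 1]
state_sizes = {name: extra_state_floats(layers_dims_demo, name)
               for name in ("gd", "momentum", "adam")}
print(state_sizes)
```

For this small network the difference is tiny, but the same factor of two applies to models with millions of parameters.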

References:

- Adam paper: https://arxiv.org/pdf/1412.6980.pdf