本文均属自己阅读源码的点滴总结,转账请注明出处谢谢。

欢迎和大家交流。qq:1037701636 email:[email protected]

Android源码版本Version:4.2.2; 硬件平台 全志A31

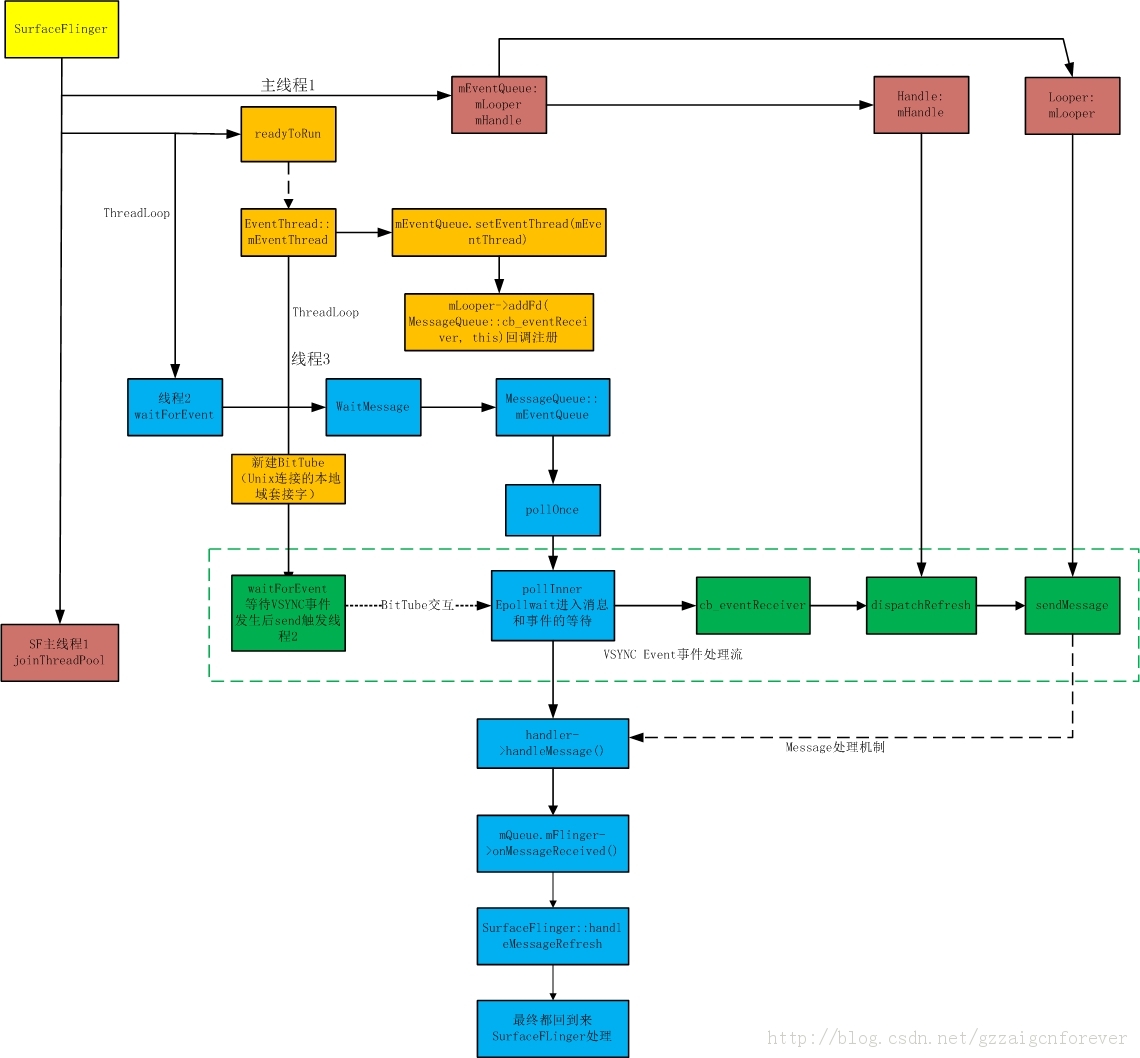

在这篇博文将会和大家一起分享我所学到的一点SurfaceFlinger中的事件和消息处理机制。

在前面的博文中,可以发现在SurfaceFlinger中的OnFirstRef里面有如下函数:

void SurfaceFlinger::onFirstRef(){ mEventQueue.init(this); run("SurfaceFlinger", PRIORITY_URGENT_DISPLAY);//启动一个新的thread线程,调用thread类的run函数 // Wait for the main thread to be done with its initialization mReadyToRunBarrier.wait();//等待线程完成相关的初始化}

step1: mEventQueue.init(),事件队列MessageQueue初始化

void MessageQueue::init(const sp<SurfaceFlinger>& flinger){ mFlinger = flinger; mLooper = new Looper(true);//新建一个消息队列,其内部为建立一个epoll的pipe mHandler = new Handler(*this);//新建消息处理句柄}这里看到了两个类Looper和Handler,听说这两个类一般是用来构成消息处理机制,下面就看看他的复杂性吧。

step2:Looper对象的新建

{ int wakeFds[2]; int result = pipe(wakeFds); LOG_ALWAYS_FATAL_IF(result != 0, "Could not create wake pipe. errno=%d", errno); mWakeReadPipeFd = wakeFds[0]; mWakeWritePipeFd = wakeFds[1];//新建管道,2个fd描述符 result = fcntl(mWakeReadPipeFd, F_SETFL, O_NONBLOCK); LOG_ALWAYS_FATAL_IF(result != 0, "Could not make wake read pipe non-blocking. errno=%d", errno); result = fcntl(mWakeWritePipeFd, F_SETFL, O_NONBLOCK); LOG_ALWAYS_FATAL_IF(result != 0, "Could not make wake write pipe non-blocking. errno=%d", errno); // Allocate the epoll instance and register the wake pipe. mEpollFd = epoll_create(EPOLL_SIZE_HINT);//epoll关注EPOLL_SIZE_HINT的文件数 LOG_ALWAYS_FATAL_IF(mEpollFd < 0, "Could not create epoll instance. errno=%d", errno); struct epoll_event eventItem; memset(& eventItem, 0, sizeof(epoll_event)); // zero out unused members of data field union eventItem.events = EPOLLIN; eventItem.data.fd = mWakeReadPipeFd;//监听可读管道mWakeReadPipeFd result = epoll_ctl(mEpollFd, EPOLL_CTL_ADD, mWakeReadPipeFd, & eventItem);//注册上述的信息,用于epoll_wait LOG_ALWAYS_FATAL_IF(result != 0, "Could not add wake read pipe to epoll instance. errno=%d", errno);}上述代码分别完成了新建一个管道pipe,使用epoll_create和epoll_ctrl来完成对读管道的监听,看是否有数据写入写管道。

step3:在SF新启动线程的readyToRun里面有mEventThread = new EventThread(this);//新建一个事件线程用于创建event的消息,处理VSYC同步事件。

EventThread这里姑且理解为事件线程,他继承了thread类,故会启动一个新的线程,先走完当前线程函数。主要用于处理VSYC的同步事件

EventThread::EventThread(const sp<SurfaceFlinger>& flinger) : mFlinger(flinger), mUseSoftwareVSync(false), mDebugVsyncEnabled(false) { for (int32_t i=0 ; i<HWC_DISPLAY_TYPES_SUPPORTED ; i++) { mVSyncEvent[i].header.type = DisplayEventReceiver::DISPLAY_EVENT_VSYNC; mVSyncEvent[i].header.id = 0; mVSyncEvent[i].header.timestamp = 0; mVSyncEvent[i].vsync.count = 0;//初始化同步事件 }}复杂的地方在这里:

void MessageQueue::setEventThread(const sp<EventThread>& eventThread){ mEventThread = eventThread; mEvents = eventThread->createEventConnection();//建立连接 mEventTube = mEvents->getDataChannel(); mLooper->addFd(mEventTube->getFd(), 0, ALOOPER_EVENT_INPUT, MessageQueue::cb_eventReceiver, this);// return mReceiveFd;,cb_eventReceiver}分解为一下几步:

1.new Connecton,connection是eventthread的内部类。

sp<EventThread::Connection> EventThread::createEventConnection() const { return new Connection(const_cast<EventThread*>(this));}EventThread::Connection::Connection( const sp<EventThread>& eventThread) : count(-1), mEventThread(eventThread), mChannel(new BitTube()){}

2. new BitTube()本质就是新建了一个Socket本地进程间通信,socketpair即可用读也可以写,其中socket[0]作为了用于读取数据,socket[1]作为用于写入数据

BitTube::BitTube() : mSendFd(-1), mReceiveFd(-1){ int sockets[2]; if (socketpair(AF_UNIX, SOCK_SEQPACKET, 0, sockets) == 0) {//unix本地套接字 int size = SOCKET_BUFFER_SIZE; setsockopt(sockets[0], SOL_SOCKET, SO_SNDBUF, &size, sizeof(size)); setsockopt(sockets[0], SOL_SOCKET, SO_RCVBUF, &size, sizeof(size)); setsockopt(sockets[1], SOL_SOCKET, SO_SNDBUF, &size, sizeof(size)); setsockopt(sockets[1], SOL_SOCKET, SO_RCVBUF, &size, sizeof(size)); fcntl(sockets[0], F_SETFL, O_NONBLOCK); fcntl(sockets[1], F_SETFL, O_NONBLOCK); mReceiveFd = sockets[0]; mSendFd = sockets[1]; } else { mReceiveFd = -errno; ALOGE("BitTube: pipe creation failed (%s)", strerror(-mReceiveFd)); }}

3.获取mChannel变量属于BitTube类

mEventTube = mEvents->getDataChannel();

4.将不同的文件描述符添加到mLooper中

int Looper::addFd(int fd, int ident, int events, ALooper_callbackFunc callback, void* data) { return addFd(fd, ident, events, callback ? new SimpleLooperCallback(callback) : NULL, data);//建立回调函数}fd = mEventTube->getFd(),返回的是之前的mReceived(Socket[0])接收管道。events: ALOOPER_EVENT_INPUT

callback: MessageQueue::cb_eventReceiver回调函数,这里会新的建立一个SimpleLooperCallback对象

继续add调用会将fd加入到epoll轮询里面,同时所有的信息都会添加到一个Request结构体里面,最终将request信息维护到成员mRequests中去。

Request request; request.fd = fd; request.ident = ident; request.callback = callback; request.data = data; struct epoll_event eventItem; memset(& eventItem, 0, sizeof(epoll_event)); // zero out unused members of data field union eventItem.events = epollEvents; eventItem.data.fd = fd; ssize_t requestIndex = mRequests.indexOfKey(fd); if (requestIndex < 0) {//新的句柄 int epollResult = epoll_ctl(mEpollFd, EPOLL_CTL_ADD, fd, & eventItem);//设置epoll参数,添加fd注册 if (epollResult < 0) { ALOGE("Error adding epoll events for fd %d, errno=%d", fd, errno); return -1; } mRequests.add(fd, request);

step4:SF新线程进入threadloop

bool SurfaceFlinger::threadLoop() { waitForEvent();//一个新的线程在等待事件消息 return true;}void SurfaceFlinger::waitForEvent() { mEventQueue.waitMessage();}依次调用流程:mLooper->pollOnce,Looper::pollAll, Looper::pollInner(int timeoutMillis)为止一直处于循环检测状态,消息的监听与处理都是在这里进行的,下面对该函数进行深入分析。

int Looper::pollInner(int timeoutMillis) {#if DEBUG_POLL_AND_WAKE ALOGD("%p ~ pollOnce - waiting: timeoutMillis=%d", this, timeoutMillis);#endif // Adjust the timeout based on when the next message is due. if (timeoutMillis != 0 && mNextMessageUptime != LLONG_MAX) { nsecs_t now = systemTime(SYSTEM_TIME_MONOTONIC); int messageTimeoutMillis = toMillisecondTimeoutDelay(now, mNextMessageUptime); if (messageTimeoutMillis >= 0 && (timeoutMillis < 0 || messageTimeoutMillis < timeoutMillis)) { timeoutMillis = messageTimeoutMillis; }#if DEBUG_POLL_AND_WAKE ALOGD("%p ~ pollOnce - next message in %lldns, adjusted timeout: timeoutMillis=%d", this, mNextMessageUptime - now, timeoutMillis);#endif } // Poll. int result = ALOOPER_POLL_WAKE; mResponses.clear(); mResponseIndex = 0; struct epoll_event eventItems[EPOLL_MAX_EVENTS]; int eventCount = epoll_wait(mEpollFd, eventItems, EPOLL_MAX_EVENTS, timeoutMillis);//epoll等待 // Acquire lock. mLock.lock(); // Check for poll error. if (eventCount < 0) { if (errno == EINTR) { goto Done; } ALOGW("Poll failed with an unexpected error, errno=%d", errno); result = ALOOPER_POLL_ERROR; goto Done; } // Check for poll timeout. if (eventCount == 0) {#if DEBUG_POLL_AND_WAKE ALOGD("%p ~ pollOnce - timeout", this);#endif result = ALOOPER_POLL_TIMEOUT; goto Done; } // Handle all events.#if DEBUG_POLL_AND_WAKE ALOGD("%p ~ pollOnce - handling events from %d fds", this, eventCount);#endif for (int i = 0; i < eventCount; i++) { int fd = eventItems[i].data.fd; uint32_t epollEvents = eventItems[i].events; if (fd == mWakeReadPipeFd) {//只是简单的wake,一般是消息发出 if (epollEvents & EPOLLIN) { awoken(); } else { ALOGW("Ignoring unexpected epoll events 0x%x on wake read pipe.", epollEvents); } } else { ssize_t requestIndex = mRequests.indexOfKey(fd);//事件 if (requestIndex >= 0) { int events = 0; if (epollEvents & EPOLLIN) events |= ALOOPER_EVENT_INPUT; if (epollEvents & EPOLLOUT) events |= ALOOPER_EVENT_OUTPUT; if (epollEvents & EPOLLERR) events |= ALOOPER_EVENT_ERROR; if (epollEvents & EPOLLHUP) events |= ALOOPER_EVENT_HANGUP; pushResponse(events, mRequests.valueAt(requestIndex));//相关请求写入mResponses里面进行请求的处理 } else { ALOGW("Ignoring unexpected epoll events 0x%x on fd %d that is " "no longer registered.", epollEvents, fd); } } }Done: ; // Invoke pending message callbacks.消息的handle处理 mNextMessageUptime = LLONG_MAX; while (mMessageEnvelopes.size() != 0) {//消息包络组里面有信息 nsecs_t now = systemTime(SYSTEM_TIME_MONOTONIC); const MessageEnvelope& messageEnvelope = mMessageEnvelopes.itemAt(0);//提取一个消息包 if (messageEnvelope.uptime <= now) { // Remove the envelope from the list. // We keep a strong reference to the handler until the call to handleMessage // finishes. Then we drop it so that the handler can be deleted *before* // we reacquire our lock. { // obtain handler sp<MessageHandler> handler = messageEnvelope.handler; Message message = messageEnvelope.message; mMessageEnvelopes.removeAt(0); mSendingMessage = true; mLock.unlock();#if DEBUG_POLL_AND_WAKE || DEBUG_CALLBACKS ALOGD("%p ~ pollOnce - sending message: handler=%p, what=%d", this, handler.get(), message.what);#endif handler->handleMessage(message);//MessageHandler消息的handle处理 } // release handler mLock.lock(); mSendingMessage = false; result = ALOOPER_POLL_CALLBACK; } else { // The last message left at the head of the queue determines the next wakeup time. mNextMessageUptime = messageEnvelope.uptime; break; } } // Release lock. mLock.unlock(); // Invoke all response callbacks.,处理请求的回调函数 for (size_t i = 0; i < mResponses.size(); i++) { Response& response = mResponses.editItemAt(i); if (response.request.ident == ALOOPER_POLL_CALLBACK) { int fd = response.request.fd; int events = response.events; void* data = response.request.data;#if DEBUG_POLL_AND_WAKE || DEBUG_CALLBACKS ALOGD("%p ~ pollOnce - invoking fd event callback %p: fd=%d, events=0x%x, data=%p", this, response.request.callback.get(), fd, events, data);#endif int callbackResult = response.request.callback->handleEvent(fd, events, data);//调用addfd的回调函数ALooper_callbackFunc if (callbackResult == 0) { removeFd(fd); } // Clear the callback reference in the response structure promptly because we // will not clear the response vector itself until the next poll. response.request.callback.clear(); result = ALOOPER_POLL_CALLBACK; } } return result;}int Looper::pollAll(int timeoutMillis, int* outFd, int* outEvents, void** outData) { if (timeoutMillis <= 0) { int result; do { result = pollOnce(timeoutMillis, outFd, outEvents, outData); } while (result == ALOOPER_POLL_CALLBACK); return result; } else { nsecs_t endTime = systemTime(SYSTEM_TIME_MONOTONIC) + milliseconds_to_nanoseconds(timeoutMillis); for (;;) { int result = pollOnce(timeoutMillis, outFd, outEvents, outData); if (result != ALOOPER_POLL_CALLBACK) { return result; } nsecs_t now = systemTime(SYSTEM_TIME_MONOTONIC); timeoutMillis = toMillisecondTimeoutDelay(now, endTime); if (timeoutMillis == 0) { return ALOOPER_POLL_TIMEOUT; } } }}1.epoll_wait进入轮询等待有数据可以读取。

2.epoll_wait等打有eventcount产生,开始对其节进行解析,假设现在epoll检测到了数据,则继续执行

3.前面可以知道epoll检测的文件描述符包括Pipe产生的mWakeReadPipeFd,双工通信socketpair的mReceived两个。

4、那么在哪来会触发后者呢,我们要回归到eventthread的threadloop函数,这里是在检测底层硬件的VSYC信号,先来看看他的实现

bool EventThread::threadLoop() { DisplayEventReceiver::Event event; Vector< sp<EventThread::Connection> > signalConnections; signalConnections = waitForEvent(&event);//轮询等待event的发生,一般是硬件的请求 // dispatch events to listeners... const size_t count = signalConnections.size(); for (size_t i=0 ; i<count ; i++) { const sp<Connection>& conn(signalConnections[i]); // now see if we still need to report this event status_t err = conn->postEvent(event);//发送事件,一般的硬件触发了事件的发生 if (err == -EAGAIN || err == -EWOULDBLOCK) { // The destination doesn't accept events anymore, it's probably // full. For now, we just drop the events on the floor. // FIXME: Note that some events cannot be dropped and would have // to be re-sent later. // Right-now we don't have the ability to do this. ALOGW("EventThread: dropping event (%08x) for connection %p", event.header.type, conn.get()); } else if (err < 0) { // handle any other error on the pipe as fatal. the only // reasonable thing to do is to clean-up this connection. // The most common error we'll get here is -EPIPE. removeDisplayEventConnection(signalConnections[i]); } } return true;}首先是要waitForEvent进入事件循环检测,在收到事件信号后,就是对连接信号的解析,这里是获取eventthread内部类Connection来进行事件的传递。依次调用如下:

DisplayEventReceiver::sendEvents(mChannel, &event, 1)――>BitTube::sendObjects(dataChannel, events, count);回到了前面的socket管道通信中来了,继续看

DisplayEventReceiver::sendEvents(mChannel, &event, 1)DisplayEventReceiver::sendEvents

ssize_t BitTube::sendObjects(const sp<BitTube>& tube, void const* events, size_t count, size_t objSize){ ssize_t numObjects = 0; for (size_t i=0 ; i<count ; i++) { const char* vaddr = reinterpret_cast<const char*>(events) + objSize * i; ssize_t size = tube->write(vaddr, objSize); if (size < 0) { // error occurred return size; } else if (size == 0) { // no more space break; } numObjects++; } return numObjects;}这里是将Vsync这个evnet变量进行打包,多个event的话还需要循环进行,然后调用write函数:

ssize_t BitTube::write(void const* vaddr, size_t size){ ssize_t err, len; do { len = ::send(mSendFd, vaddr, size, MSG_DONTWAIT | MSG_NOSIGNAL); err = len < 0 ? errno : 0; } while (err == EINTR); return err == 0 ? len : -err;}很清楚的是调用了socket专属的send函数,而这里的fd正是mSendFd,而epoll_wait那里等待就是mRecieved的读管道口。这样就可以通过进程间通信,触发我们的SF的2号线程epoll_wait进行处理。

下面我们继续回到Looper::pollInner这个复杂的函数内部,在epoll_wait执行之后,主要是先对message进行业务的处理,然后才是对应的event的事件回调。

if (requestIndex >= 0) { int events = 0; if (epollEvents & EPOLLIN) events |= ALOOPER_EVENT_INPUT; if (epollEvents & EPOLLOUT) events |= ALOOPER_EVENT_OUTPUT; if (epollEvents & EPOLLERR) events |= ALOOPER_EVENT_ERROR; if (epollEvents & EPOLLHUP) events |= ALOOPER_EVENT_HANGUP; pushResponse(events, mRequests.valueAt(requestIndex))上述代码完成的是将epoll的事件类型转为ALOOPER自身的类型,这里是事件输入。pushResponse主要是将mRequests中的事件请求从Looper维护的内存里面提取出来,requestIndex = mRequests.indexOfKey(fd)可以看到,这个请求的索引值是通过当前的fd来提取的,而之前保存的时候正是通过fd来关联的。

void Looper::pushResponse(int events, const Request& request) { Response response; response.events = events; response.request = request; mResponses.push(response);}mResponses里面存储着events,request等信息,主要在下面对回调解析时使用。

// Invoke all response callbacks.,处理请求的回调函数 for (size_t i = 0; i < mResponses.size(); i++) { Response& response = mResponses.editItemAt(i); if (response.request.ident == ALOOPER_POLL_CALLBACK) { int fd = response.request.fd; int events = response.events; void* data = response.request.data;#if DEBUG_POLL_AND_WAKE || DEBUG_CALLBACKS ALOGD("%p ~ pollOnce - invoking fd event callback %p: fd=%d, events=0x%x, data=%p", this, response.request.callback.get(), fd, events, data);#endif int callbackResult = response.request.callback->handleEvent(fd, events, data);//调用addfd的回调函数ALooper_callbackFunc if (callbackResult == 0) { removeFd(fd); } // Clear the callback reference in the response structure promptly because we // will not clear the response vector itself until the next poll. response.request.callback.clear(); result = ALOOPER_POLL_CALLBACK; } }在前面addFd处,将SF的EventThread新建的BitTube添加到了mLooper里面,当时的fd设置的request的ident就是ALOOPER_POLL_CALLBACK。接着取出响应response的信息,开始进行准备回调handleEvent().那么这个callback是什么呢?还是要回到addfd里面,可以看到request.callback = callback;这样可以定位到mLooper->addFd(mEventTube->getFd(), 0, ALOOPER_EVENT_INPUT,MessageQueue::cb_eventReceiver, this); 故这里的callback MessageQueue的成员函数cb_eventReceiver,但是他是作为new SimpleLooperCallback(callback)初始化到了一个SimpleLoopeCallback对象中,而该类继承了LooperCallback这个基类,也就是说callback->handleEvent()也就是调用派生类SimpleLooperCallback的handleEvent(),如下:

int SimpleLooperCallback::handleEvent(int fd, int events, void* data) { return mCallback(fd, events, data);}的确,mCallBack才是ALooper_callbackFunc这个函数指针,当初是赋值为了cb_eventReceiver,故回调该函数。

int MessageQueue::cb_eventReceiver(int fd, int events, void* data) { MessageQueue* queue = reinterpret_cast<MessageQueue *>(data); return queue->eventReceiver(fd, events);}而上面的data一就是MessageQueue::setEventThread时传入的this即SurfaceFlinger的mMessageQueue成员,现在开始执行事件的接收,其实就是开始做消息的发送了,也就是进入消息处理机制。

int MessageQueue::eventReceiver(int fd, int events) { ssize_t n; DisplayEventReceiver::Event buffer[8]; while ((n = DisplayEventReceiver::getEvents(mEventTube, buffer, 8)) > 0) {//得到事件的数据 for (int i=0 ; i<n ; i++) { if (buffer[i].header.type == DisplayEventReceiver::DISPLAY_EVENT_VSYNC) {#if INVALIDATE_ON_VSYNC mHandler->dispatchInvalidate();#else mHandler->dispatchRefresh();//刷新#endif break; } } } return 1;}getevents最终是调用recv来获得基于Unix域单连接的socket端发send过来的数据,判断数据的类型是不是显示帧同步事件,可以很容易的看到在EventThread的waitevent中的event事件结构体初始化:

for (int32_t i=0 ; i<HWC_DISPLAY_TYPES_SUPPORTED ; i++) { mVSyncEvent[i].header.type = DisplayEventReceiver::DISPLAY_EVENT_VSYNC; mVSyncEvent[i].header.id = 0; mVSyncEvent[i].header.timestamp = 0; mVSyncEvent[i].vsync.count = 0;//初始化同步事件 }故直接进入mHandler->dispatchRefresh()函数,

void MessageQueue::Handler::dispatchRefresh() { if ((android_atomic_or(eventMaskRefresh, &mEventMask) & eventMaskRefresh) == 0) { mQueue.mLooper->sendMessage(this, Message(MessageQueue::REFRESH)); }}

void Looper::sendMessage(const sp<MessageHandler>& handler, const Message& message) { nsecs_t now = systemTime(SYSTEM_TIME_MONOTONIC); sendMessageAtTime(now, handler, message); //及时发送消息}

void Looper::sendMessageAtTime(nsecs_t uptime, const sp<MessageHandler>& handler, const Message& message) {#if DEBUG_CALLBACKS ALOGD("%p ~ sendMessageAtTime - uptime=%lld, handler=%p, what=%d", this, uptime, handler.get(), message.what);#endif size_t i = 0; { // acquire lock AutoMutex _l(mLock); size_t messageCount = mMessageEnvelopes.size(); while (i < messageCount && uptime >= mMessageEnvelopes.itemAt(i).uptime) { i += 1; } MessageEnvelope messageEnvelope(uptime, handler, message); mMessageEnvelopes.insertAt(messageEnvelope, i, 1);//加入消息包到loop中去 // Optimization: If the Looper is currently sending a message, then we can skip // the call to wake() because the next thing the Looper will do after processing // messages is to decide when the next wakeup time should be. In fact, it does // not even matter whether this code is running on the Looper thread. if (mSendingMessage) { return; } } // release lock // Wake the poll loop only when we enqueue a new message at the head. if (i == 0) { wake();//在消息队列头检测到有一个新的消息后唤醒 }}。sendMessageAtTime这里理解为及时发送消息,那消息是以什么形式存在的呢?其实可以注意到Message message传入的类型为Refresh。 size_t messageCount = mMessageEnvelopes.size();来获取Looper中所存在的消息包的个数,可以看到如果之前looper还有消息没有处理,那么i+=1,直到当前消息放在最后,实现的代码如下,将消息加入到了mMessageEnvelops中去了。

MessageEnvelope messageEnvelope(uptime, handler, message);

mMessageEnvelopes.insertAt(messageEnvelope, i, 1);//加入消息包到loop中去

那么怎么触发消息的处理呢,如果只有当前的一个消息,直接wake()之前的pollInner内的epoll_wai函数,做消息的处理,接着就看pollInner内的消息处理机制吧:

// Invoke pending message callbacks.消息的handle处理 mNextMessageUptime = LLONG_MAX; while (mMessageEnvelopes.size() != 0) {//消息包络组里面有信息 nsecs_t now = systemTime(SYSTEM_TIME_MONOTONIC); const MessageEnvelope& messageEnvelope = mMessageEnvelopes.itemAt(0);//提取一个消息包 if (messageEnvelope.uptime <= now) { // Remove the envelope from the list. // We keep a strong reference to the handler until the call to handleMessage // finishes. Then we drop it so that the handler can be deleted *before* // we reacquire our lock. { // obtain handler sp<MessageHandler> handler = messageEnvelope.handler; Message message = messageEnvelope.message; mMessageEnvelopes.removeAt(0); mSendingMessage = true; mLock.unlock();#if DEBUG_POLL_AND_WAKE || DEBUG_CALLBACKS ALOGD("%p ~ pollOnce - sending message: handler=%p, what=%d", this, handler.get(), message.what);#endif handler->handleMessage(message);//MessageHandler消息的handle处理 } // release handler mLock.lock(); mSendingMessage = false; result = ALOOPER_POLL_CALLBACK; } else { // The last message left at the head of the queue determines the next wakeup time. mNextMessageUptime = messageEnvelope.uptime; break; } }核心的过程解析messageEnvelope消息包,取出对应的消息以及消息处理的handle,进行处理,那么立即的handle是什么呢?回归到mHandler->dispatchRefresh()――》MessageQueue::Handler::dispatchRefresh()里面可以看到 mQueue.mLooper->sendMessage(this, Message(MessageQueue::REFRESH));,这个handle就是mHandle啦,故来看MessageQueue的内部Handle的成员函数handleMessage,很高兴的是,果然与之前设置的message.what结合在一起了。最终把消息的处理权还是提交给了mFlinger

void MessageQueue::Handler::handleMessage(const Message& message) { switch (message.what) { case INVALIDATE: android_atomic_and(~eventMaskInvalidate, &mEventMask); mQueue.mFlinger->onMessageReceived(message.what); break; case REFRESH: android_atomic_and(~eventMaskRefresh, &mEventMask); mQueue.mFlinger->onMessageReceived(message.what);//线程接收到了消息后处理 break; }}onMessageReceived――>handleMessageRefresh处理屏幕刷新这个消息,主要调用composer来完成。

void SurfaceFlinger::handleMessageRefresh() {//处理layer的刷新,实际是调用SF绘图 ATRACE_CALL(); preComposition(); rebuildLayerStacks(); setUpHWComposer(); doDebugFlashRegions(); doComposition(); postComposition();}

通过以上内容就走完了Surface的屏幕刷新事件提交以及事件处理,消息触发,以及消息的处理。最终还是回归到SurfaceFlinger来完成最终的消息处理。本文最终将SF的消息和事件处理机制总结成以下的流程图,加速理解。蓝色核心框图是SurfaceFlinger的主要业务和服务的处理线程。