This article is based on the book 《Android系统源代码情景分析》 (Android System Source Code: Scenario Analysis) by Luo Shengyang (罗升阳).

I. Test code:

~/Android/external/binder/server

----FregServer.cpp

~/Android/external/binder/common

----IFregService.cpp

----IFregService.h

~/Android/external/binder/client

----FregClient.cpp

Binder library (libbinder) code:

~/Android/frameworks/base/libs/binder

----BpBinder.cpp

----Parcel.cpp

----ProcessState.cpp

----Binder.cpp

----IInterface.cpp

----IPCThreadState.cpp

----IServiceManager.cpp

----Static.cpp

~/Android/frameworks/base/include/binder

----Binder.h

----BpBinder.h

----IInterface.h

----IPCThreadState.h

----IServiceManager.h

----IBinder.h

----Parcel.h

----ProcessState.h

Driver-layer code:

~/Android/kernel/goldfish/drivers/staging/android

----binder.c

----binder.h

II. Source code analysis

In the previous article, "Android Binder IPC: Registering a Service Component: Packaging the IPC Data" (http://blog.csdn.net/jltxgcy/article/details/26059215), we went through the part of addService that packages the data:

~/Android/frameworks/base/libs/binder

----IServiceManager.cpp

```cpp
class BpServiceManager : public BpInterface<IServiceManager>
{
public:
    .........
    virtual status_t addService(const String16& name, const sp<IBinder>& service)
    {
        Parcel data, reply;
        data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor()); // write the Binder IPC request header
        data.writeString16(name);        // write the name of the Service component being registered
        data.writeStrongBinder(service); // wrap the Service component in a flat_binder_object and write it into data
        status_t err = remote()->transact(ADD_SERVICE_TRANSACTION, data, &reply); // remote() returns a BpBinder object
        return err == NO_ERROR ? reply.readExceptionCode() : err;
    }
    ..........
};
```

Next, the transact member function of the internal Binder proxy object is called to send an ADD_SERVICE_TRANSACTION command protocol. remote() returns the BpBinder object, whose transact member function is implemented as follows:

~/Android/frameworks/base/libs/binder

----BpBinder.cpp

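```cpp
status_t BpBinder::transact(
    uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags) // note: data is a reference, reply is a pointer
{
    // Once a binder has died, it will never come back to life.
    if (mAlive) {
        status_t status = IPCThreadState::self()->transact(
            mHandle, code, data, reply, flags); // each parameter is described below
        if (status == DEAD_OBJECT) mAlive = 0;
        return status;
    }

    return DEAD_OBJECT;
}
```

Here mHandle is 0 and code is ADD_SERVICE_TRANSACTION. data holds the IPC data to be passed to the Binder driver. The third parameter, reply, is an output parameter that receives the IPC result. The fourth parameter, flags, says whether the request is synchronous or asynchronous. It is a default parameter whose default value 0 denotes a synchronous request. The call goes to the transact member function of the IPCThreadState object created earlier (see http://blog.csdn.net/jltxgcy/article/details/25953361), implemented as follows: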

~/Android/frameworks/base/libs/binder

----IPCThreadState.cpp

```cpp
status_t IPCThreadState::transact(int32_t handle,
                                  uint32_t code, const Parcel& data,
                                  Parcel* reply, uint32_t flags)
{
    status_t err = data.errorCheck();

    flags |= TF_ACCEPT_FDS; // flags is now 0 | TF_ACCEPT_FDS

    .......
    if (err == NO_ERROR) {
        ..........
        err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL); // copy data into a binder_transaction_data struct
    }

    ........

    if ((flags & TF_ONE_WAY) == 0) { // lowest bit is 0, so flags & TF_ONE_WAY == 0: a synchronous IPC request
        ..........
        if (reply) { // reply is a non-NULL pointer
            err = waitForResponse(reply);
        } else {
            .........
        }
        ..........
    } else {
        ..........
    }

    return err;
}
```

TF_ACCEPT_FDS and TF_ONE_WAY are defined in an enum, as follows:

~/Android/kernel/goldfish/drivers/staging/android

----binder.h

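```c
enum transaction_flags {
    TF_ONE_WAY     = 0x01,
    TF_ROOT_OBJECT = 0x04,
    TF_STATUS_CODE = 0x08,
    TF_ACCEPT_FDS  = 0x10,
};
```

transact first checks that the IPC data in the Parcel object is valid, then sets the TF_ACCEPT_FDS bit of flags to 1, allowing the Server process to carry file descriptors in its reply. If the data in the Parcel object data is valid, it calls the member function writeTransactionData to write its contents into a binder_transaction_data struct. It then checks whether the TF_ONE_WAY bit of flags is 0. If it is, the request is synchronous, and if the Parcel object reply that receives the result is not NULL, the member function waitForResponse is called.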
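The flag handling just described can be tried out in isolation. The sketch below is not libbinder code: prepareFlags and isSynchronous are hypothetical helper names. It reuses the enum values shown above, ORs in TF_ACCEPT_FDS the way IPCThreadState::transact does, and applies the same TF_ONE_WAY test that decides whether waitForResponse is called.

```cpp
#include <cassert>
#include <cstdint>

// Values copied from enum transaction_flags above.
enum transaction_flags : uint32_t {
    TF_ONE_WAY     = 0x01,
    TF_ROOT_OBJECT = 0x04,
    TF_STATUS_CODE = 0x08,
    TF_ACCEPT_FDS  = 0x10,
};

// Hypothetical helper: mirrors `flags |= TF_ACCEPT_FDS;` in IPCThreadState::transact.
uint32_t prepareFlags(uint32_t callerFlags) {
    return callerFlags | TF_ACCEPT_FDS;
}

// Hypothetical helper: mirrors `(flags & TF_ONE_WAY) == 0`, i.e. a synchronous
// request for which transact() goes on to call waitForResponse(reply).
bool isSynchronous(uint32_t flags) {
    return (flags & TF_ONE_WAY) == 0;
}
```

addService passes the default flags 0, so prepareFlags(0) yields 0x10 and the request is synchronous. A caller that passes TF_ONE_WAY gets 0x11, and the wait for a result is skipped.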

We first analyze writeTransactionData, implemented as follows:

~/Android/frameworks/base/libs/binder

----IPCThreadState.cpp

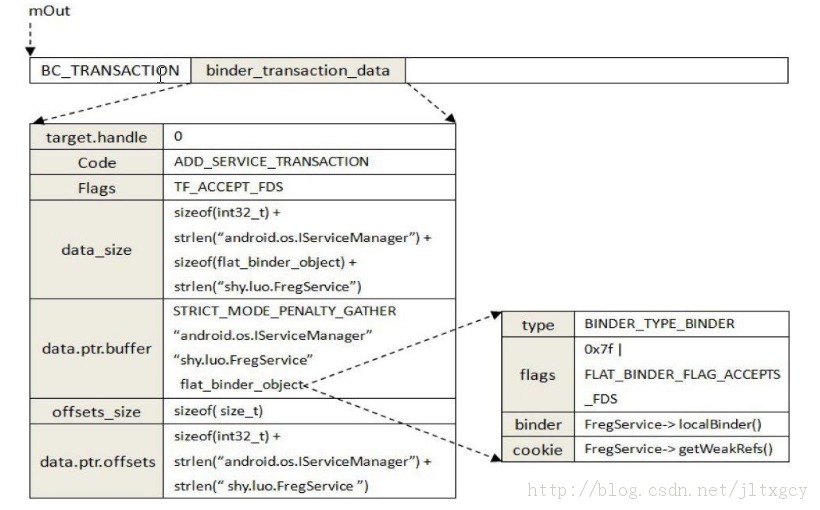

status_t IPCThreadState::writeTransactionData(int32_t cmd, uint32_t binderFlags,//此时cmd为BC_TRANSACTION,binderFlags为0|TF_ACCEPT_FDS int32_t handle, uint32_t code, const Parcel& data, status_t* statusBuffer)//handle为0,code为ADD_SERVICE_TRANSACTION,data包含了包含了要传递给Binder驱动程序的进程间通信数据,statusBuffer为NULL{ binder_transaction_data tr; tr.target.handle = handle;//0 tr.code = code;//ADD_SERVICE_TRANSACTION tr.flags = binderFlags;//0|TF_ACCEPT_FDS const status_t err = data.errorCheck(); if (err == NO_ERROR) { tr.data_size = data.ipcDataSize();//数据缓冲区大小 tr.data.ptr.buffer = data.ipcData();//数据缓冲区的起始位置 tr.offsets_size = data.ipcObjectsCount()*sizeof(size_t);//偏移数组大小 tr.data.ptr.offsets = data.ipcObjects();//偏移数组起始位置 } else if (statusBuffer) { ........ } else { ........ } mOut.writeInt32(cmd);//BC_TRANSACTION mOut.write(&tr, sizeof(tr)); return NO_ERROR;} 其中binder_transaction_data结构体,实现如下:struct binder_transaction_data { union { size_t handle; void *ptr; } target; void *cookie; unsigned int code; unsigned int flags; pid_t sender_pid; uid_t sender_euid; size_t data_size; size_t offsets_size; union { struct { const void *buffer; const void *offsets; }ptr; uint8_t buf[8]; } data;}; 执行完writeTransactionData,此时命令协议缓冲区mOut的内存布局如下图:

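Note: this figure contains an error. In flat_binder_object, cookie is a BBinder pointer, that is, the address of the Binder local object, and binder is the address of the local object's weak reference count.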
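The mOut layout described above is a 4-byte command followed immediately by a raw copy of the struct. The sketch below reproduces it with a byte vector. FakeTransactionData is a hypothetical, pointer-free stand-in for binder_transaction_data, and kFakeBcTransaction is a placeholder. The real BC_TRANSACTION value is produced by an ioctl-encoding macro in binder.h and is not reproduced here.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical stand-in for binder_transaction_data; the real struct also
// carries cookie, sender_pid, sender_euid and the buffer/offsets pointers.
struct FakeTransactionData {
    uint32_t handle;       // tr.target.handle (0 = Service Manager)
    uint32_t code;         // tr.code
    uint32_t flags;        // tr.flags
    uint32_t data_size;    // tr.data_size
    uint32_t offsets_size; // tr.offsets_size
};

// Placeholder command value (assumption, not the real BC_TRANSACTION).
constexpr int32_t kFakeBcTransaction = 1;

// Appends [int32 cmd][struct bytes], like mOut.writeInt32(cmd); mOut.write(&tr, sizeof(tr));
void appendCommand(std::vector<uint8_t>& out, int32_t cmd, const FakeTransactionData& tr) {
    const uint8_t* c = reinterpret_cast<const uint8_t*>(&cmd);
    out.insert(out.end(), c, c + sizeof(cmd));
    const uint8_t* t = reinterpret_cast<const uint8_t*>(&tr);
    out.insert(out.end(), t, t + sizeof(tr));
}

// Reads the record back: first the 4-byte command, then the struct that follows it.
int32_t readCommand(const std::vector<uint8_t>& in, FakeTransactionData* tr) {
    assert(in.size() >= sizeof(int32_t) + sizeof(*tr));
    int32_t cmd;
    std::memcpy(&cmd, in.data(), sizeof(cmd));
    std::memcpy(tr, in.data() + sizeof(cmd), sizeof(*tr));
    return cmd;
}
```

Because the struct is copied byte for byte, the receiver has to know its exact size. The driver relies on this when it copies sizeof(tr) bytes after reading the command.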

With writeTransactionData done, waitForResponse runs next. It is implemented as follows:

~/Android/frameworks/base/libs/binder

----IPCThreadState.cpp

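```cpp
status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)
{
    int32_t cmd;
    int32_t err;

    while (1) {
        if ((err=talkWithDriver()) < NO_ERROR) break;
        .....
    }
    .......
}
```

This function repeatedly calls the member function talkWithDriver in a while loop to interact with the Binder driver. This sends the BC_TRANSACTION command protocol prepared above to the driver for processing and then waits for the driver to return the IPC result. talkWithDriver is implemented as follows: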

~/Android/frameworks/base/libs/binder

----IPCThreadState.cpp

```cpp
status_t IPCThreadState::talkWithDriver(bool doReceive)
{
    LOG_ASSERT(mProcess->mDriverFD >= 0, "Binder driver is not opened");

    binder_write_read bwr;

    // Is the read buffer empty?
    const bool needRead = mIn.dataPosition() >= mIn.dataSize(); // true: mIn has been fully consumed, so new data must be read from the driver

    // We don't want to write anything if we are still reading
    // from data left in the input buffer and the caller
    // has requested to read the next data.
    const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0; // doReceive (default true) means the caller also wants return protocols; mOut is sent unless unread data is still left in mIn and the caller wants to receive

    bwr.write_size = outAvail;                         // bytes to write: the current size of mOut
    bwr.write_buffer = (long unsigned int)mOut.data(); // start of the data to write

    // This is what we'll read.
    if (doReceive && needRead) {
        bwr.read_size = mIn.dataCapacity();              // bytes to read: the full capacity of mIn
        bwr.read_buffer = (long unsigned int)mIn.data(); // where the data read is stored
    } else {
        bwr.read_size = 0;
    }
    .........
    // Return immediately if there is nothing to do.
    if ((bwr.write_size == 0) && (bwr.read_size == 0)) return NO_ERROR; // nothing to write or read, so the driver is not entered

    bwr.write_consumed = 0; // reset the consumed counters
    bwr.read_consumed = 0;
    status_t err;
    do {
        .........
#if defined(HAVE_ANDROID_OS)
        if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0) // mProcess was initialized in the IPCThreadState constructor
            err = NO_ERROR;
        else
            err = -errno;
#else
        err = INVALID_OPERATION;
#endif
        IF_LOG_COMMANDS() {
            alog << "Finished read/write, write size = " << mOut.dataSize() << endl;
        }
    } while (err == -EINTR);

    ........

    if (err >= NO_ERROR) {
        if (bwr.write_consumed > 0) {
            if (bwr.write_consumed < (ssize_t)mOut.dataSize())
                mOut.remove(0, bwr.write_consumed);
            else
                mOut.setDataSize(0);
        }
        if (bwr.read_consumed > 0) {
            mIn.setDataSize(bwr.read_consumed);
            mIn.setDataPosition(0);
        }
        .........
        return NO_ERROR;
    }

    return err;
}
```

Inside IPCThreadState, the buffer mOut holds the command protocols about to be sent to the Binder driver, and the buffer mIn holds the return protocols received from the driver. talkWithDriver fills a local binder_write_read struct from mIn and mOut and finally calls ioctl, which is dispatched to the Binder driver's binder_ioctl.
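The size decisions in talkWithDriver can be isolated from the Parcel and ioctl plumbing. The sketch below is a hypothetical model: BufferState, WriteRead, planTalk and outSizeAfter are illustrative names, not libbinder API. It keeps only the needRead / outAvail / read_size arithmetic and the way mOut shrinks after the driver reports write_consumed.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical snapshot of the buffer state talkWithDriver inspects.
struct BufferState {
    size_t inPosition; // mIn.dataPosition()
    size_t inSize;     // mIn.dataSize()
    size_t inCapacity; // mIn.dataCapacity()
    size_t outSize;    // mOut.dataSize()
};

// The two size fields of binder_write_read.
struct WriteRead {
    size_t write_size;
    size_t read_size;
};

// Mirrors the needRead / outAvail / read_size computation.
WriteRead planTalk(const BufferState& s, bool doReceive) {
    const bool needRead = s.inPosition >= s.inSize; // mIn fully consumed
    WriteRead bwr{};
    bwr.write_size = (!doReceive || needRead) ? s.outSize : 0;
    bwr.read_size = (doReceive && needRead) ? s.inCapacity : 0;
    return bwr;
}

// Mirrors the post-ioctl cleanup: drop the consumed prefix of mOut, or empty it.
size_t outSizeAfter(size_t outSize, size_t writeConsumed) {
    return writeConsumed < outSize ? outSize - writeConsumed : 0;
}
```

With mIn empty and mOut holding the BC_TRANSACTION command, both sizes are non-zero. While mIn still holds unread return protocols, both are zero and talkWithDriver returns without entering the driver.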

~/Android/kernel/goldfish/drivers/staging/android

----binder.c

```c
static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)
{
    int ret;
    struct binder_proc *proc = filp->private_data;
    struct binder_thread *thread;
    unsigned int size = _IOC_SIZE(cmd);
    void __user *ubuf = (void __user *)arg; // address of the local binder_write_read struct passed in from user space

    .........

    mutex_lock(&binder_lock);
    thread = binder_get_thread(proc); // open_driver already called ioctl while ProcessState was initialized, so looper is 0 here
    if (thread == NULL) {
        ret = -ENOMEM;
        goto err;
    }

    switch (cmd) { // cmd is BINDER_WRITE_READ, passed in from user space
    case BINDER_WRITE_READ: {
        struct binder_write_read bwr;
        if (size != sizeof(struct binder_write_read)) {
            ret = -EINVAL;
            goto err;
        }
        if (copy_from_user(&bwr, ubuf, sizeof(bwr))) { // copy the user-space binder_write_read into bwr: buffer addresses, total sizes and bytes consumed so far
            ret = -EFAULT;
            goto err;
        }
        .........
        if (bwr.write_size > 0) { // write_size > 0, so this branch runs
            ret = binder_thread_write(proc, thread, (void __user *)bwr.write_buffer, bwr.write_size, &bwr.write_consumed);
            if (ret < 0) {
                bwr.read_consumed = 0;
                if (copy_to_user(ubuf, &bwr, sizeof(bwr)))
                    ret = -EFAULT;
                goto err;
            }
        }
        if (bwr.read_size > 0) { // read_size > 0, so this branch runs too
            ret = binder_thread_read(proc, thread, (void __user *)bwr.read_buffer, bwr.read_size, &bwr.read_consumed, filp->f_flags & O_NONBLOCK);
            if (!list_empty(&proc->todo))
                wake_up_interruptible(&proc->wait);
            if (ret < 0) {
                if (copy_to_user(ubuf, &bwr, sizeof(bwr)))
                    ret = -EFAULT;
                goto err;
            }
        }
        ...........
        if (copy_to_user(ubuf, &bwr, sizeof(bwr))) { // return the updated bwr to user space
            ret = -EFAULT;
            goto err;
        }
        break;
    }
    ..........
    ret = 0;
err:
    if (thread)
        thread->looper &= ~BINDER_LOOPER_STATE_NEED_RETURN; // looper is still 0
    mutex_unlock(&binder_lock);
    ...........
    return ret;
}
```

Since both write_size and read_size are greater than 0, binder_thread_write runs first, followed by binder_thread_read.
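The ordering rule of the BINDER_WRITE_READ branch (write half first, then read half, each only when its size is non-zero) can be modeled in user space. In the sketch below, Bwr, Handler and writeRead are hypothetical names. The two callbacks stand in for binder_thread_write and binder_thread_read, and the copy_to_user calls and locking are omitted.

```cpp
#include <cassert>
#include <functional>

// Hypothetical mirror of the size/consumed fields of binder_write_read.
struct Bwr {
    long write_size = 0, write_consumed = 0;
    long read_size = 0, read_consumed = 0;
};

// Stand-in signature for binder_thread_write / binder_thread_read:
// process up to `size` bytes and advance *consumed; negative means error.
using Handler = std::function<int(long size, long* consumed)>;

// Write half first, then read half, each only if its size is > 0.
// On a write error the read counter is reset and the error returned.
int writeRead(Bwr& bwr, const Handler& threadWrite, const Handler& threadRead) {
    if (bwr.write_size > 0) {
        int ret = threadWrite(bwr.write_size, &bwr.write_consumed);
        if (ret < 0) {
            bwr.read_consumed = 0; // nothing was read
            return ret;
        }
    }
    if (bwr.read_size > 0) {
        int ret = threadRead(bwr.read_size, &bwr.read_consumed);
        if (ret < 0) return ret;
    }
    return 0;
}
```

Processing writes before reads means the BC_TRANSACTION just sent has already queued its BINDER_WORK_TRANSACTION_COMPLETE item by the time the read half looks at the thread's todo queue.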

binder_thread_write is implemented as follows:

~/Android/kernel/goldfish/drivers/staging/android

----binder.c

```c
int
binder_thread_write(struct binder_proc *proc, struct binder_thread *thread,
                    void __user *buffer, int size, signed long *consumed) // note: consumed is a pointer
{
    uint32_t cmd;
    void __user *ptr = buffer + *consumed; // start position
    void __user *end = buffer + size;      // end position

    while (ptr < end && thread->return_error == BR_OK) {
        if (get_user(cmd, (uint32_t __user *)ptr)) // cmd is BC_TRANSACTION
            return -EFAULT;
        ptr += sizeof(uint32_t); // advance ptr past the command
        ......
        switch (cmd) {
        ......
        case BC_TRANSACTION:
        case BC_REPLY: {
            struct binder_transaction_data tr;

            if (copy_from_user(&tr, ptr, sizeof(tr))) // read the IPC data into the binder_transaction_data struct tr
                return -EFAULT;
            ptr += sizeof(tr);
            binder_transaction(proc, thread, &tr, cmd == BC_REPLY); // let binder_transaction handle the BC_TRANSACTION command
            break;
        }
        .......
        }
        *consumed = ptr - buffer; // ends up equal to size: all of the data has been consumed
    }
    return 0;
}
```

From the call path above, we know that this output buffer contains one BC_TRANSACTION command protocol. The function reads the IPC data that follows the command into the binder_transaction_data struct tr, then calls binder_transaction to handle the BC_TRANSACTION command sent by the process. binder_transaction handles both BC_TRANSACTION and BC_REPLY. Its implementation is long, so we read it in sections:

~/Android/kernel/goldfish/drivers/staging/android

----binder.c

```c
static void
binder_transaction(struct binder_proc *proc, struct binder_thread *thread,
                   struct binder_transaction_data *tr, int reply)
{
    struct binder_transaction *t;
    struct binder_work *tcomplete;
    size_t *offp, *off_end;
    struct binder_proc *target_proc;
    struct binder_thread *target_thread = NULL;
    struct binder_node *target_node = NULL;
    struct list_head *target_list;
    wait_queue_head_t *target_wait;
    struct binder_transaction *in_reply_to = NULL;
    .........

    if (reply) { // 0 here
        .........
    } else {
        if (tr->target.handle) { // 0 here
            struct binder_ref *ref;
            ref = binder_get_ref(proc, tr->target.handle);
            .......
            target_node = ref->node;
        } else {
            target_node = binder_context_mgr_node; // the Binder node of Service Manager
            ........
        }
        ........
        target_proc = target_node->proc; // the target process has been found
        ........
        if (!(tr->flags & TF_ONE_WAY) && thread->transaction_stack) { // thread->transaction_stack is still NULL, so this block is skipped
            struct binder_transaction *tmp;
            tmp = thread->transaction_stack;
            ........
            while (tmp) {
                if (tmp->from && tmp->from->proc == target_proc)
                    target_thread = tmp->from;
                tmp = tmp->from_parent;
            }
        }
    }
    if (target_thread) { // target_thread is NULL
        ........
        target_list = &target_thread->todo;
        target_wait = &target_thread->wait;
    } else {
        target_list = &target_proc->todo; // target_list and target_wait point to target_proc's todo queue and wait queue
        target_wait = &target_proc->wait;
    }
```

At this point target_node and target_proc have been found, and target_list and target_wait have been initialized. The function continues:

```c
    t = kzalloc(sizeof(*t), GFP_KERNEL); // allocate a binder_transaction struct t
    ......
    tcomplete = kzalloc(sizeof(*tcomplete), GFP_KERNEL); // allocate a binder_work struct tcomplete
    ......
    if (!reply && !(tr->flags & TF_ONE_WAY)) // handling BC_TRANSACTION, and the request is synchronous
        t->from = thread; // from points to the source thread, so that once target_proc or target_thread has handled the request, the sending thread can be found again and the result returned to it
    else
        t->from = NULL;
    t->sender_euid = proc->tsk->cred->euid;
    t->to_proc = target_proc;     // target process
    t->to_thread = target_thread; // target thread
    t->code = tr->code;           // ADD_SERVICE_TRANSACTION
    t->flags = tr->flags;         // TF_ACCEPT_FDS
    t->priority = task_nice(current);
    t->buffer = binder_alloc_buf(target_proc, tr->data_size,
        tr->offsets_size, !reply && (t->flags & TF_ONE_WAY)); // allocate a binder_buffer for target_proc
    .......
    t->buffer->allow_user_free = 0; // user space may not free this buffer yet
    .......
    t->buffer->transaction = t;
    t->buffer->target_node = target_node; // binder_context_mgr_node
    if (target_node)
        binder_inc_node(target_node, 1, 0, NULL); // increment the strong reference count of the target Binder node

    offp = (size_t *)(t->buffer->data + ALIGN(tr->data_size, sizeof(void *))); // start of the offsets array inside data, right after the data buffer

    if (copy_from_user(t->buffer->data, tr->data.ptr.buffer, tr->data_size)) { // copy the data buffer into data
        .......
        goto err_copy_data_failed;
    }
    if (copy_from_user(offp, tr->data.ptr.offsets, tr->offsets_size)) { // copy the offsets array into data, after the data buffer
        .......
        goto err_copy_data_failed;
    }
    ........
    off_end = (void *)offp + tr->offsets_size; // end of the offsets array inside data
```

The binder_transaction struct is defined as follows:

~/Android/kernel/goldfish/drivers/staging/android

----binder.c

```c
struct binder_transaction {
    int debug_id;
    struct binder_work work;
    struct binder_thread *from;
    struct binder_transaction *from_parent;
    struct binder_proc *to_proc;
    struct binder_thread *to_thread;
    struct binder_transaction *to_parent;
    unsigned need_reply : 1;
    /*unsigned is_dead : 1;*/ /* not used at the moment */

    struct binder_buffer *buffer;
    unsigned int code;
    unsigned int flags;
    long priority;
    long saved_priority;
    uid_t sender_euid;
};
```

The binder_buffer struct it uses is defined as follows:

~/Android/kernel/goldfish/drivers/staging/android

----binder.c

```c
struct binder_buffer {
    struct list_head entry; /* free and allocated entries by addesss */
    struct rb_node rb_node; /* free entry by size or allocated entry */
                            /* by address */
    unsigned free : 1;
    unsigned allow_user_free : 1;
    unsigned async_transaction : 1;
    unsigned debug_id : 29;

    struct binder_transaction *transaction;

    struct binder_node *target_node;
    size_t data_size;
    size_t offsets_size;
    uint8_t data[0];
};
```

In this section, binder_transaction first allocates a binder_transaction struct t and initializes its fields. It then copies the data buffer and the offsets array from user space into t->buffer->data.
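The placement of the offsets array inside t->buffer->data is plain arithmetic: the data buffer comes first, and the offsets array starts at data_size rounded up to a multiple of sizeof(void *). The sketch below computes those offsets. alignUp uses the same round-up formula as the kernel's ALIGN for a power-of-two alignment. BufferLayout and layout are hypothetical names, and the pointer size is a parameter rather than taken from a real target.

```cpp
#include <cassert>
#include <cstddef>

// Round x up to a multiple of a (a must be a power of two), as ALIGN(x, a) does.
constexpr size_t alignUp(size_t x, size_t a) {
    return (x + a - 1) & ~(a - 1);
}

// Hypothetical description of the layout, as byte offsets from t->buffer->data.
struct BufferLayout {
    size_t offsetsBegin; // offp - data
    size_t offsetsEnd;   // off_end - data
};

// Where binder_transaction puts the offsets array for a given transaction.
BufferLayout layout(size_t dataSize, size_t offsetsSize, size_t pointerSize) {
    assert((pointerSize & (pointerSize - 1)) == 0); // alignment must be a power of two
    BufferLayout l{};
    l.offsetsBegin = alignUp(dataSize, pointerSize);
    l.offsetsEnd = l.offsetsBegin + offsetsSize;
    return l;
}
```

For example, with 13 bytes of data and two 4-byte offsets (offsets_size = ipcObjectsCount() * sizeof(size_t) on a 32-bit target), the offsets array occupies bytes 16 to 24 of data. The three padding bytes keep each size_t entry aligned.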

binder_transaction then continues:

```c
    for (; offp < off_end; offp++) {
        struct flat_binder_object *fp;
        .......
        fp = (struct flat_binder_object *)(t->buffer->data + *offp); // fetch the flat_binder_object
        switch (fp->type) { // BINDER_TYPE_BINDER
        case BINDER_TYPE_BINDER:
        case BINDER_TYPE_WEAK_BINDER: {
            struct binder_ref *ref;
            struct binder_node *node = binder_get_node(proc, fp->binder); // NULL the first time
            if (node == NULL) { // so this branch runs
                node = binder_new_node(proc, fp->binder, fp->cookie);
                if (node == NULL) {
                    return_error = BR_FAILED_REPLY;
                    goto err_binder_new_node_failed;
                }
                node->min_priority = fp->flags & FLAT_BINDER_FLAG_PRIORITY_MASK;
                node->accept_fds = !!(fp->flags & FLAT_BINDER_FLAG_ACCEPTS_FDS); // accept_fds is 1
            }
            ........
            ref = binder_get_ref_for_node(target_proc, node);
            ........
            if (fp->type == BINDER_TYPE_BINDER)
                fp->type = BINDER_TYPE_HANDLE; // type becomes BINDER_TYPE_HANDLE
            else
                fp->type = BINDER_TYPE_WEAK_HANDLE;
            fp->handle = ref->desc; // type is now HANDLE, so handle is set as well
            binder_inc_ref(ref, fp->type == BINDER_TYPE_HANDLE, &thread->todo); // increment the reference count
            .......
        } break;
        .......
        }
    }
```

binder_get_node cannot find a Binder entity object (binder_node) for this Binder local object, because it is being passed to the driver for the first time. binder_new_node is therefore called to create a Binder entity object node for it. binder_new_node is implemented as follows:

~/Android/kernel/goldfish/drivers/staging/android

----binder.c

```c
static struct binder_node *
binder_new_node(struct binder_proc *proc, void __user *ptr, void __user *cookie)
{
    struct rb_node **p = &proc->nodes.rb_node;
    struct rb_node *parent = NULL;
    struct binder_node *node;

    while (*p) { // search proc->nodes by ptr for an existing binder_node
        parent = *p;
        node = rb_entry(parent, struct binder_node, rb_node);

        if (ptr < node->ptr)
            p = &(*p)->rb_left;
        else if (ptr > node->ptr)
            p = &(*p)->rb_right;
        else
            return NULL;
    }

    node = kzalloc(sizeof(*node), GFP_KERNEL); // not found: allocate a binder_node
    if (node == NULL)
        return NULL;
    binder_stats.obj_created[BINDER_STAT_NODE]++;
    rb_link_node(&node->rb_node, parent, p); // insert node->rb_node into proc->nodes, keyed by ptr
    rb_insert_color(&node->rb_node, &proc->nodes);
    node->debug_id = ++binder_last_id; // initialize the fields
    node->proc = proc;
    node->ptr = ptr;       // address of the weak reference count
    node->cookie = cookie; // BBinder (address of the Binder local object)
    node->work.type = BINDER_WORK_NODE;
    INIT_LIST_HEAD(&node->work.entry);
    INIT_LIST_HEAD(&node->async_todo);
    if (binder_debug_mask & BINDER_DEBUG_INTERNAL_REFS)
        printk(KERN_INFO "binder: %d:%d node %d u%p c%p created\n",
               proc->pid, current->pid, node->debug_id,
               node->ptr, node->cookie);
    return node;
}
```

Back in the for loop, binder_get_ref_for_node is called next. It is implemented as follows:

~/Android/kernel/goldfish/drivers/staging/android

----binder.c

```c
static struct binder_ref *
binder_get_ref_for_node(struct binder_proc *proc, struct binder_node *node)
{
    struct rb_node *n;
    struct rb_node **p = &proc->refs_by_node.rb_node;
    struct rb_node *parent = NULL;
    struct binder_ref *ref, *new_ref;

    while (*p) { // search proc->refs_by_node by node for an existing binder_ref
        parent = *p;
        ref = rb_entry(parent, struct binder_ref, rb_node_node);

        if (node < ref->node)
            p = &(*p)->rb_left;
        else if (node > ref->node)
            p = &(*p)->rb_right;
        else
            return ref;
    }
    new_ref = kzalloc(sizeof(*ref), GFP_KERNEL); // not found: allocate a binder_ref
    if (new_ref == NULL)
        return NULL;
    binder_stats.obj_created[BINDER_STAT_REF]++;
    ........
    new_ref->proc = proc;
    new_ref->node = node; // the node just created
    rb_link_node(&new_ref->rb_node_node, parent, p); // insert new_ref->rb_node_node into proc->refs_by_node
    rb_insert_color(&new_ref->rb_node_node, &proc->refs_by_node);

    new_ref->desc = (node == binder_context_mgr_node) ? 0 : 1; // starts at 1 here; the loop raises it to the smallest unused descriptor
    for (n = rb_first(&proc->refs_by_desc); n != NULL; n = rb_next(n)) {
        ref = rb_entry(n, struct binder_ref, rb_node_desc);
        if (ref->desc > new_ref->desc)
            break;
        new_ref->desc = ref->desc + 1;
    }

    p = &proc->refs_by_desc.rb_node;
    while (*p) {
        parent = *p;
        ref = rb_entry(parent, struct binder_ref, rb_node_desc);

        if (new_ref->desc < ref->desc)
            p = &(*p)->rb_left;
        else if (new_ref->desc > ref->desc)
            p = &(*p)->rb_right;
        else
            BUG();
    }
    rb_link_node(&new_ref->rb_node_desc, parent, p); // insert new_ref->rb_node_desc into proc->refs_by_desc
    rb_insert_color(&new_ref->rb_node_desc, &proc->refs_by_desc);
    if (node) {
        hlist_add_head(&new_ref->node_entry, &node->refs);
        ......
    } else {
        ......
    }
    return new_ref;
}
```

Once the Binder reference object has been obtained, the type of the flat_binder_object is changed to BINDER_TYPE_HANDLE (it was BINDER_TYPE_BINDER). Accordingly, fp->handle (previously fp->binder; the two fields share a union) is set to ref->desc. The function then continues:

```c
    if (reply) {
        .....
    } else if (!(t->flags & TF_ONE_WAY)) {
        BUG_ON(t->buffer->async_transaction != 0);
        t->need_reply = 1; // need_reply is 1
        t->from_parent = thread->transaction_stack;
        thread->transaction_stack = t; // push t onto this thread's transaction stack
    } else {
        BUG_ON(target_node == NULL);
        BUG_ON(t->buffer->async_transaction != 1);
        if (target_node->has_async_transaction) {
            target_list = &target_node->async_todo;
            target_wait = NULL;
        } else
            target_node->has_async_transaction = 1;
    }
    t->work.type = BINDER_WORK_TRANSACTION;
    list_add_tail(&t->work.entry, target_list); // add to the target process's todo queue
    tcomplete->type = BINDER_WORK_TRANSACTION_COMPLETE;
    list_add_tail(&tcomplete->entry, &thread->todo); // add to the current thread's todo queue
    if (target_wait)
        wake_up_interruptible(target_wait); // wake up the target process
    return;
```

We assume that the current thread keeps running and that the woken target process runs only after the current thread is done. Control returns to binder_ioctl, which goes on to call binder_thread_read, implemented as follows:

```c
static int
binder_thread_read(struct binder_proc *proc, struct binder_thread *thread,
                   void __user *buffer, int size, signed long *consumed, int non_block)
{
    void __user *ptr = buffer + *consumed;
    void __user *end = buffer + size;

    int ret = 0;
    int wait_for_proc_work;

    if (*consumed == 0) {
        if (put_user(BR_NOOP, (uint32_t __user *)ptr))
            return -EFAULT;
        ptr += sizeof(uint32_t);
    }

retry:
    wait_for_proc_work = thread->transaction_stack == NULL && list_empty(&thread->todo); // 0: transaction_stack holds t and todo holds tcomplete
    .......
    thread->looper |= BINDER_LOOPER_STATE_WAITING; // looper is BINDER_LOOPER_STATE_WAITING
    if (wait_for_proc_work)
        proc->ready_threads++;
    mutex_unlock(&binder_lock);
    if (wait_for_proc_work) {
        ........
    } else {
        if (non_block) {
            if (!binder_has_thread_work(thread))
                ret = -EAGAIN;
        } else // this branch runs
            ret = wait_event_interruptible(thread->wait, binder_has_thread_work(thread)); // the thread has pending work, so it does not sleep
    }
    mutex_lock(&binder_lock);
    if (wait_for_proc_work)
        proc->ready_threads--;
    thread->looper &= ~BINDER_LOOPER_STATE_WAITING; // looper is 0 again

    if (ret)
        return ret;

    while (1) {
        uint32_t cmd;
        struct binder_transaction_data tr;
        struct binder_work *w;
        struct binder_transaction *t = NULL;

        if (!list_empty(&thread->todo))
            w = list_first_entry(&thread->todo, struct binder_work, entry); // take the BINDER_WORK_TRANSACTION_COMPLETE work item off thread's todo queue
        else if (!list_empty(&proc->todo) && wait_for_proc_work)
            w = list_first_entry(&proc->todo, struct binder_work, entry);
        else {
            if (ptr - buffer == 4 && !(thread->looper & BINDER_LOOPER_STATE_NEED_RETURN)) /* no data added */
                goto retry;
            break;
        }
        ........
        switch (w->type) { // the item just taken has type BINDER_WORK_TRANSACTION_COMPLETE
        .......
        case BINDER_WORK_TRANSACTION_COMPLETE: {
            cmd = BR_TRANSACTION_COMPLETE;
            if (put_user(cmd, (uint32_t __user *)ptr)) // write a BR_TRANSACTION_COMPLETE return protocol into the user-supplied buffer
                return -EFAULT;
            ptr += sizeof(uint32_t);
            .......
            list_del(&w->entry); // remove the work item from todo
            kfree(w);            // free the struct
            .......
        } break;
        ........
        }
        ........
    }
    *consumed = ptr - buffer; // number of bytes written
    ...........
    return 0;
}
```

Because *consumed is 0 on entry, binder_thread_read first writes a BR_NOOP at the start of the read buffer. It then takes the BINDER_WORK_TRANSACTION_COMPLETE work item off thread's todo queue and writes a BR_TRANSACTION_COMPLETE return protocol after it in the user-supplied buffer. When binder_thread_read finishes, control returns to binder_ioctl and then to IPCThreadState::talkWithDriver, where one more piece of code remains to be executed:

The relevant part of talkWithDriver is as follows:

~/Android/frameworks/base/libs/binder

----IPCThreadState.cpp

```cpp
    if (err >= NO_ERROR) {
        if (bwr.write_consumed > 0) {
            if (bwr.write_consumed < (ssize_t)mOut.dataSize())
                mOut.remove(0, bwr.write_consumed); // remove the data already written from mOut
            else
                mOut.setDataSize(0);
        }
        if (bwr.read_consumed > 0) {
            mIn.setDataSize(bwr.read_consumed); // mIn's data size is the number of bytes read: BR_NOOP followed by BR_TRANSACTION_COMPLETE
            mIn.setDataPosition(0);             // read position 0: the start of the returned protocols
        }
        .........
        return NO_ERROR;
    }

    return err;
```

Finally, control returns to IPCThreadState::waitForResponse, implemented as follows:

~/Android/frameworks/base/libs/binder

----IPCThreadState.cpp

```cpp
status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)
{
    int32_t cmd;
    int32_t err;

    while (1) {
        if ((err=talkWithDriver()) < NO_ERROR) break;
        err = mIn.errorCheck();
        if (err < NO_ERROR) break;
        if (mIn.dataAvail() == 0) continue;

        cmd = mIn.readInt32(); // after the leading BR_NOOP, this reads BR_TRANSACTION_COMPLETE and mIn is then empty
        .....
        switch (cmd) {
        case BR_TRANSACTION_COMPLETE:
            if (!reply && !acquireResult) goto finish; // reply is not NULL, so no jump
            break;
        .....
        }
        ........
    }
    ........
    return err;
}
```

The cmd read is BR_TRANSACTION_COMPLETE, so the switch takes that case and break leaves the switch statement. The while loop then calls talkWithDriver again. By now the command protocols in mOut and the return protocols in mIn have all been processed. When the thread enters the driver's binder_ioctl again through the BINDER_WRITE_READ ioctl, there is nothing to write, so only binder_thread_read is called. binder_thread_read then sleeps, waiting for the target process to return the result of the IPC request just sent. The thread sleeps on this line:

```c
ret = wait_event_interruptible(thread->wait, binder_has_thread_work(thread));
```
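The sequence of return protocols that the synchronous waitForResponse loop works through can be summarized as a small state machine. The sketch below is a hypothetical model, not libbinder code. ReturnCode uses placeholder values (the real BR_* codes are ioctl-encoded in binder.h), and drainIn treats an empty mIn as the point where talkWithDriver would issue a read-only BINDER_WRITE_READ and block.

```cpp
#include <cassert>
#include <deque>
#include <string>
#include <vector>

// Placeholder codes standing in for BR_NOOP and BR_TRANSACTION_COMPLETE.
enum class ReturnCode { Noop, TransactionComplete };

// Hypothetical model: consume what binder_thread_read put into mIn and
// record what the waiting thread does with each return protocol.
std::vector<std::string> drainIn(std::deque<ReturnCode> mIn, bool haveReply) {
    std::vector<std::string> trace;
    while (true) {
        if (mIn.empty()) {
            // Nothing left to write or read locally: the next talkWithDriver
            // call only reads, and the driver sleeps in wait_event_interruptible.
            trace.push_back("block in binder_thread_read");
            return trace;
        }
        ReturnCode cmd = mIn.front();
        mIn.pop_front();
        switch (cmd) {
        case ReturnCode::Noop:
            trace.push_back("ignore BR_NOOP");
            break;
        case ReturnCode::TransactionComplete:
            trace.push_back("BR_TRANSACTION_COMPLETE");
            if (!haveReply) return trace; // no reply Parcel: like `goto finish`
            break;                        // reply expected: keep looping
        }
    }
}
```

For addService, reply is non-NULL, so the model processes BR_NOOP and BR_TRANSACTION_COMPLETE and then blocks. This matches the point reached above, where the thread sleeps until the target process returns its result.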