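Author: Tyan

Blog: noahsnail.com | CSDN | Jianshu

Disclaimer: The author translated this paper for study purposes only. If there is any infringement, please contact the author to have the post removed. Thank you!

Collection of translated papers: https://github.com/SnailTyan/deep-learning-papers-translation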

Going Deeper with Convolutions

Abstract

We propose a deep convolutional neural network architecture codenamed Inception that achieves the new state of the art for classification and detection in the ImageNet Large-Scale Visual Recognition Challenge 2014 (ILSVRC14). The main hallmark of this architecture is the improved utilization of the computing resources inside the network. By a carefully crafted design, we increased the depth and width of the network while keeping the computational budget constant. To optimize quality, the architectural decisions were based on the Hebbian principle and the intuition of multi-scale processing. One particular incarnation used in our submission for ILSVRC14 is called GoogLeNet, a 22-layer deep network, the quality of which is assessed in the context of classification and detection.

1. Introduction

In the last three years, our object classification and detection capabilities have dramatically improved due to advances in deep learning and convolutional networks [10]. One piece of encouraging news is that most of this progress is not just the result of more powerful hardware, larger datasets and bigger models, but mainly a consequence of new ideas, algorithms and improved network architectures. No new data sources were used, for example, by the top entries in the ILSVRC 2014 competition besides the classification dataset of the same competition for detection purposes. Our GoogLeNet submission to ILSVRC 2014 actually uses 12 times fewer parameters than the winning architecture of Krizhevsky et al. [9] from two years ago, while being significantly more accurate. On the object detection front, the biggest gains have not come from naive application of bigger and bigger deep networks, but from the synergy of deep architectures and classical computer vision, like the R-CNN algorithm by Girshick et al. [6].

Another notable factor is that with the ongoing traction of mobile and embedded computing, the efficiency of our algorithms, especially their power and memory use, gains importance. It is noteworthy that the considerations leading to the design of the deep architecture presented in this paper included this factor rather than having a sheer fixation on accuracy numbers. For most of the experiments, the models were designed to keep a computational budget of 1.5 billion multiply-adds at inference time, so that they do not end up being a purely academic curiosity, but could be put to real-world use, even on large datasets, at a reasonable cost.

In this paper, we will focus on an efficient deep neural network architecture for computer vision, codenamed Inception, which derives its name from the Network in network paper by Lin et al. [12] in conjunction with the famous "we need to go deeper" internet meme [1]. In our case, the word "deep" is used in two different meanings: first of all, in the sense that we introduce a new level of organization in the form of the "Inception module" and also in the more direct sense of increased network depth. In general, one can view the Inception model as a logical culmination of [12] while taking inspiration and guidance from the theoretical work by Arora et al. [2]. The benefits of the architecture are experimentally verified on the ILSVRC 2014 classification and detection challenges, where it significantly outperforms the current state of the art.

2. Related Work

Starting with LeNet-5 [10], convolutional neural networks (CNN) have typically had a standard structure: stacked convolutional layers (optionally followed by contrast normalization and max-pooling) are followed by one or more fully-connected layers. Variants of this basic design are prevalent in the image classification literature and have yielded the best results to date on MNIST, CIFAR and most notably on the ImageNet classification challenge [9, 21]. For larger datasets such as ImageNet, the recent trend has been to increase the number of layers [12] and layer size [21, 14], while using dropout [7] to address the problem of overfitting.

Despite concerns that max-pooling layers result in loss of accurate spatial information, the same convolutional network architecture as [9] has also been successfully employed for localization [9, 14], object detection [6, 14, 18, 5] and human pose estimation [19].

Inspired by a neuroscience model of the primate visual cortex, Serre et al. [15] used a series of fixed Gabor filters of different sizes to handle multiple scales. We use a similar strategy here. However, contrary to the fixed 2-layer deep model of [15], all filters in the Inception architecture are learned. Furthermore, Inception layers are repeated many times, leading to a 22-layer deep model in the case of the GoogLeNet model.

Network-in-Network is an approach proposed by Lin et al. [12] in order to increase the representational power of neural networks. In their model, additional 1×1 convolutional layers are added to the network, increasing its depth. We use this approach heavily in our architecture. However, in our setting, 1×1 convolutions have a dual purpose: most critically, they are used mainly as dimension reduction modules to remove computational bottlenecks that would otherwise limit the size of our networks. This allows for increasing not just the depth, but also the width of our networks without a significant performance penalty.

Finally, the current state of the art for object detection is the Regions with Convolutional Neural Networks (R-CNN) method by Girshick et al. [6]. R-CNN decomposes the overall detection problem into two subproblems: utilizing low-level cues such as color and texture in order to generate object location proposals in a category-agnostic fashion and using CNN classifiers to identify object categories at those locations. Such a two stage approach leverages the accuracy of bounding box segmentation with low-level cues, as well as the highly powerful classification power of state-of-the-art CNNs. We adopted a similar pipeline in our detection submissions, but have explored enhancements in both stages, such as multi-box [5] prediction for higher object bounding box recall, and ensemble approaches for better categorization of bounding box proposals.

3. Motivation and High Level Considerations

The most straightforward way of improving the performance of deep neural networks is by increasing their size. This includes increasing both the depth (the number of network levels) and the width (the number of units at each level). This is an easy and safe way of training higher quality models, especially given the availability of a large amount of labeled training data. However, this simple solution comes with two major drawbacks.

Bigger size typically means a larger number of parameters, which makes the enlarged network more prone to overfitting, especially if the number of labeled examples in the training set is limited. This is a major bottleneck as strongly labeled datasets are laborious and expensive to obtain, often requiring expert human raters to distinguish between various fine-grained visual categories such as those in ImageNet (even in the 1000-class ILSVRC subset) as shown in Figure 1.

Figure 1: Two distinct classes from the 1000 classes of the ILSVRC 2014 classification challenge. Domain knowledge is required to distinguish between these classes.

The other drawback of uniformly increased network size is the dramatically increased use of computational resources. For example, in a deep vision network, if two convolutional layers are chained, any uniform increase in the number of their filters results in a quadratic increase of computation. If the added capacity is used inefficiently (for example, if most weights end up close to zero), then much of the computation is wasted. As the computational budget is always finite, an efficient distribution of computing resources is preferred to an indiscriminate increase of size, even when the main objective is to increase the quality of performance.
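
The quadratic growth can be checked with a small multiply-add count. The sketch below is only illustrative: the feature-map size, kernel size and filter counts are assumed values, not figures from the paper, and both layers are assumed to be stride-1 convolutions that preserve spatial size.

```python
def conv_multiply_adds(height, width, kernel, in_channels, out_channels):
    """Multiply-adds for one stride-1, size-preserving convolution layer."""
    return height * width * kernel * kernel * in_channels * out_channels


def chained_cost(height, width, kernel, in_channels, filters):
    """Cost of two chained layers that each produce `filters` channels."""
    first = conv_multiply_adds(height, width, kernel, in_channels, filters)
    # The second layer reads `filters` channels and writes `filters` channels,
    # so its cost depends on filters * filters.
    second = conv_multiply_adds(height, width, kernel, filters, filters)
    return first, second


# Hypothetical sizes chosen for illustration only.
base = chained_cost(28, 28, 3, 64, 128)
doubled = chained_cost(28, 28, 3, 64, 256)

print("first layer growth: ", doubled[0] / base[0])
print("second layer growth:", doubled[1] / base[1])
```

Doubling the filter count doubles the cost of the first layer but quadruples the cost of the second, because both its input and output widths grew.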

A fundamental way of solving both of these issues would be to introduce sparsity and replace the fully connected layers by the sparse ones, even inside the convolutions. Besides mimicking biological systems, this would also have the advantage of firmer theoretical underpinnings due to the groundbreaking work of Arora et al. [2]. Their main result states that if the probability distribution of the dataset is representable by a large, very sparse deep neural network, then the optimal network topology can be constructed layer after layer by analyzing the correlation statistics of the preceding layer activations and clustering neurons with highly correlated outputs. Although the strict mathematical proof requires very strong conditions, the fact that this statement resonates with the well-known Hebbian principle (neurons that fire together, wire together) suggests that the underlying idea is applicable even under less strict conditions, in practice.

Unfortunately, today's computing infrastructures are very inefficient when it comes to numerical calculation on non-uniform sparse data structures. Even if the number of arithmetic operations is reduced by 100×, the overhead of lookups and cache misses would dominate: switching to sparse matrices might not pay off. The gap is widened yet further by the use of steadily improving and highly tuned numerical libraries that allow for extremely fast dense matrix multiplication, exploiting the minute details of the underlying CPU or GPU hardware [16, 9]. Also, non-uniform sparse models require more sophisticated engineering and computing infrastructure. Most current vision-oriented machine learning systems utilize sparsity in the spatial domain just by virtue of employing convolutions. However, convolutions are implemented as collections of dense connections to the patches in the earlier layer. ConvNets have traditionally used random and sparse connection tables in the feature dimensions since [11] in order to break the symmetry and improve learning, yet the trend changed back to full connections with [9] in order to further optimize parallel computation. Current state-of-the-art architectures for computer vision have uniform structure. The large number of filters and greater batch size allows for the efficient use of dense computation.

This raises the question of whether there is any hope for a next, intermediate step: an architecture that makes use of filter-level sparsity, as suggested by the theory, but exploits our current hardware by utilizing computations on dense matrices. The vast literature on sparse matrix computations (e.g. [3]) suggests that clustering sparse matrices into relatively dense submatrices tends to give competitive performance for sparse matrix multiplication. It does not seem far-fetched to think that similar methods would be utilized for the automated construction of non-uniform deep-learning architectures in the near future.

The Inception architecture started out as a case study for assessing the hypothetical output of a sophisticated network topology construction algorithm that tries to approximate a sparse structure implied by [2] for vision networks and covering the hypothesized outcome by dense, readily available components. Despite being a highly speculative undertaking, modest gains were observed early on when compared with reference networks based on [12]. With a bit of tuning the gap widened and Inception proved to be especially useful in the context of localization and object detection as the base network for [6] and [5]. Interestingly, while most of the original architectural choices have been questioned and tested thoroughly in separation, they turned out to be close to optimal locally. One must be cautious though: although the Inception architecture has become a success for computer vision, it is still questionable whether this can be attributed to the guiding principles that have led to its construction. Making sure of this would require a much more thorough analysis and verification.

4. Architectural Details

The main idea of the Inception architecture is to consider how an optimal local sparse structure of a convolutional vision network can be approximated and covered by readily available dense components. Note that assuming translation invariance means that our network will be built from convolutional building blocks. All we need is to find the optimal local construction and to repeat it spatially. Arora et al. [2] suggest a layer-by-layer construction where one should analyze the correlation statistics of the last layer and cluster them into groups of units with high correlation. These clusters form the units of the next layer and are connected to the units in the previous layer. We assume that each unit from an earlier layer corresponds to some region of the input image and these units are grouped into filter banks. In the lower layers (the ones close to the input) correlated units would concentrate in local regions. Thus, we would end up with a lot of clusters concentrated in a single region and they can be covered by a layer of 1×1 convolutions in the next layer, as suggested in [12]. However, one can also expect that there will be a smaller number of more spatially spread out clusters that can be covered by convolutions over larger patches, and there will be a decreasing number of patches over larger and larger regions. In order to avoid patch-alignment issues, current incarnations of the Inception architecture are restricted to filter sizes 1×1, 3×3 and 5×5; this decision was based more on convenience than on necessity. It also means that the suggested architecture is a combination of all those layers with their output filter banks concatenated into a single output vector forming the input of the next stage. Additionally, since pooling operations have been essential for the success of current convolutional networks, it suggests that adding an alternative parallel pooling path in each such stage should have additional beneficial effect, too (see Figure 2(a)).

As these "Inception modules" are stacked on top of each other, their output correlation statistics are bound to vary: as features of higher abstraction are captured by higher layers, their spatial concentration is expected to decrease. This suggests that the ratio of 3×3 and 5×5 convolutions should increase as we move to higher layers.

One big problem with the above modules, at least in this naive form, is that even a modest number of 5×5 convolutions can be prohibitively expensive on top of a convolutional layer with a large number of filters. This problem becomes even more pronounced once pooling units are added to the mix: the number of output filters of the pooling branch equals the number of filters in the previous stage. The merging of the output of the pooling layer with the outputs of the convolutional layers would lead to an inevitable increase in the number of outputs from stage to stage. While this architecture might cover the optimal sparse structure, it would do it very inefficiently, leading to a computational blow-up within a few stages.
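
The growth in output depth can be traced with a few lines of arithmetic. This is a minimal sketch under assumed numbers: the input depth and the per-branch filter counts below are hypothetical, and every stage is assumed to reuse the same branch widths.

```python
def naive_module_depth(in_depth, n1x1, n3x3, n5x5):
    """Output depth of a naive module: three conv branches plus pooling.

    The pooling branch has no filters of its own, so it passes through
    all `in_depth` channels and they are concatenated with the rest.
    """
    return n1x1 + n3x3 + n5x5 + in_depth


def stack_depths(in_depth, branch_filters, stages):
    """Depth after each of `stages` naive modules stacked in sequence."""
    depths = [in_depth]
    for _ in range(stages):
        depths.append(naive_module_depth(depths[-1], *branch_filters))
    return depths


# Hypothetical widths: 64 1x1, 128 3x3 and 32 5x5 filters per stage.
depths = stack_depths(192, (64, 128, 32), 4)
print(depths)
```

Because the pooling branch always contributes the full input depth, the depth can only grow from stage to stage, and the 3×3 and 5×5 branches of each later stage have to convolve over that ever wider input.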

This leads to the second idea of the Inception architecture: judiciously reducing dimension wherever the computational requirements would increase too much otherwise. This is based on the success of embeddings: even low dimensional embeddings might contain a lot of information about a relatively large image patch. However, embeddings represent information in a dense, compressed form and compressed information is harder to process. The representation should be kept sparse at most places (as required by the conditions of [2]) and compress the signals only whenever they have to be aggregated en masse. That is, 1×1 convolutions are used to compute reductions before the expensive 3×3 and 5×5 convolutions. Besides being used as reductions, they also include the use of rectified linear activation, making them dual-purpose. The final result is depicted in Figure 2(b).
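
To make the saving concrete, the sketch below compares the multiply-adds of a direct 5×5 convolution with a 1×1 reduction followed by the same 5×5 convolution. The feature-map size (28×28×192), the 32 output filters and the 16 reduction filters are illustrative assumptions, and biases and activations are ignored.

```python
def conv_madds(height, width, kernel, in_channels, out_channels):
    """Multiply-adds of a stride-1, size-preserving convolution."""
    return height * width * kernel * kernel * in_channels * out_channels


# Assumed, illustrative sizes (not taken from the text above).
H, W, C_IN = 28, 28, 192
N5, R5 = 32, 16  # 5x5 output filters, 1x1 reduction filters

# 5x5 convolution applied directly to all 192 input channels.
direct = conv_madds(H, W, 5, C_IN, N5)

# 1x1 reduction to 16 channels, then the 5x5 convolution on the reduced map.
reduced = conv_madds(H, W, 1, C_IN, R5) + conv_madds(H, W, 5, R5, N5)

print(f"direct 5x5:       {direct:,} multiply-adds")
print(f"1x1 then 5x5:     {reduced:,} multiply-adds")
print(f"reduction factor: {direct / reduced:.1f}x")
```

With these numbers the reduced path needs roughly a tenth of the arithmetic, and the extra 1×1 layer accounts for only a small share of what remains.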

In general, an Inception network is a network consisting of modules of the above type stacked upon each other, with occasional max-pooling layers with stride 2 to halve the resolution of the grid. For technical reasons (memory efficiency during training), it seemed beneficial to start using Inception modules only at higher layers while keeping the lower layers in traditional convolutional fashion. This is not strictly necessary, simply reflecting some infrastructural inefficiencies in our current implementation.

A useful aspect of this architecture is that it allows for increasing the number of units at each stage significantly without an uncontrolled blow-up in computational complexity at later stages. This is achieved by the ubiquitous use of dimensionality reduction prior to expensive convolutions with larger patch sizes. Furthermore, the design follows the practical intuition that visual information should be processed at various scales and then aggregated so that the next stage can abstract features from the different scales simultaneously.

The improved use of computational resources allows for increasing both the width of each stage and the number of stages without getting into computational difficulties. One can also utilize the Inception architecture to create slightly inferior, but computationally cheaper versions of it. We have found that all the available knobs and levers allow for a controlled balancing of computational resources, resulting in networks that are 3-10× faster than similarly performing networks with non-Inception architecture; however, this requires careful manual design at this point.

5. GoogLeNet

By the "GoogLeNet" name we refer to the particular incarnation of the Inception architecture used in our submission for the ILSVRC 2014 competition. We also used one deeper and wider Inception network with slightly superior quality, but adding it to the ensemble seemed to improve the results only marginally. We omit the details of that network, as empirical evidence suggests that the influence of the exact architectural parameters is relatively minor. Table 1 illustrates the most common instance of Inception used in the competition. This network (trained with different image patch sampling methods) was used for 6 out of the 7 models in our ensemble.

All the convolutions, including those inside the Inception modules, use rectified linear activation. The size of the receptive field in our network is 224×224 in the RGB color space with zero mean. "#3×3 reduce" and "#5×5 reduce" stand for the number of 1×1 filters in the reduction layer used before the 3×3 and 5×5 convolutions. One can see the number of 1×1 filters in the projection layer after the built-in max-pooling in the pool proj column. All these reduction/projection layers use rectified linear activation as well.

The network was designed with computational efficiency and practicality in mind, so that inference can be run on individual devices including even those with limited computational resources, especially with low-memory footprint. The network is 22 layers deep when counting only layers with parameters (or 27 layers if we also count pooling). The overall number of layers (independent building blocks) used for the construction of the network is about 100. The exact number depends on how layers are counted by the machine learning infrastructure. The use of average pooling before the classifier is based on [12], although our implementation has an additional linear layer. The linear layer enables us to easily adapt our networks to other label sets; however, it is used mostly for convenience and we do not expect it to have a major effect. We found that a move from fully connected layers to average pooling improved the top-1 accuracy by about 0.6%; however, the use of dropout remained essential even after removing the fully connected layers.

Given relatively large depth of the network, the ability to propagate gradients back through all the layers in an effective manner was a concern. The strong performance of shallower networks on this task suggests that the features produced by the layers in the middle of the network should be very discriminative. By adding auxiliary classifiers connected to these intermediate layers, discrimination in the lower stages in the classifier was expected. This was thought to combat the vanishing gradient problem while providing regularization. These classifiers take the form of smaller convolutional networks put on top of the output of the Inception (4a) and (4d) modules. During training, their loss gets added to the total loss of the network with a discount weight (the losses of the auxiliary classifiers were weighted by 0.3). At inference time, these auxiliary networks are discarded. Later control experiments have shown that the effect of the auxiliary networks is relatively minor (around 0.5%) and that it required only one of them to achieve the same effect.
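
The weighting described above amounts to a simple sum at training time. The sketch below assumes the per-head losses have already been computed as plain floats; the function name and the example loss values are hypothetical.

```python
AUX_WEIGHT = 0.3  # discount weight for auxiliary classifier losses


def training_loss(main_loss, aux_losses, aux_weight=AUX_WEIGHT):
    """Total training loss: main loss plus discounted auxiliary losses."""
    return main_loss + aux_weight * sum(aux_losses)


def inference_loss(main_loss):
    """At inference time the auxiliary heads are discarded entirely."""
    return main_loss


# Hypothetical loss values for the main head and the two auxiliary heads.
total = training_loss(2.0, [2.5, 3.0])
print(total)
```

Only the training objective changes; the auxiliary heads contribute gradients to the intermediate layers and play no part in the deployed network.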

The exact structure of the extra network on the side, including the auxiliary classifier, is as follows:

- An average pooling layer with 5×5 filter size and stride 3, resulting in a 4×4×512 output for the (4a), and 4×4×528 for the (4d) stage.

- A 1×1 convolution with 128 filters for dimension reduction and rectified linear activation.

- A fully connected layer with 1024 units and rectified linear activation.

- A dropout layer with 70% ratio of dropped outputs.

- A linear layer with softmax loss as the classifier (predicting the same 1000 classes as the main classifier, but removed at inference time).
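
The shapes in this list can be checked with a short calculation. The sketch assumes a 14×14 spatial input to the auxiliary branch and unpadded pooling (neither is stated in the list itself), and it counts weights plus biases for each parameterised layer.

```python
def pool_output_size(size, window, stride):
    """Spatial size after unpadded pooling."""
    return (size - window) // stride + 1


def aux_head_summary(in_channels, spatial=14, num_classes=1000):
    """Shapes and parameter counts of the auxiliary classifier head.

    `spatial=14` is an assumption about the feature-map size at this stage.
    """
    side = pool_output_size(spatial, 5, 3)           # 5x5 average pool, stride 3
    conv_params = in_channels * 128 + 128            # 1x1 conv, 128 filters
    fc_inputs = side * side * 128                    # flattened conv output
    fc_params = fc_inputs * 1024 + 1024              # fully connected, 1024 units
    linear_params = 1024 * num_classes + num_classes  # final linear classifier
    return {
        "pooled_shape": (side, side, in_channels),
        "conv_params": conv_params,
        "fc_params": fc_params,
        "linear_params": linear_params,
    }


head_4a = aux_head_summary(512)
head_4d = aux_head_summary(528)
print(head_4a)
print(head_4d)
```

Under these assumptions the pooled shapes come out as 4×4×512 and 4×4×528, matching the list, and most of the head's parameters sit in the fully connected layer.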

A schematic view of the resulting network is depicted in Figure 3.

Figure 3: GoogLeNet network with all the bells and whistles.

6. Training Methodology

GoogLeNet networks were trained using the DistBelief [4] distributed machine learning system using a modest amount of model and data-parallelism. Although we used a CPU based implementation only, a rough estimate suggests that the GoogLeNet network could be trained to convergence using a few high-end GPUs within a week, the main limitation being the memory usage. Our training used asynchronous stochastic gradient descent with 0.9 momentum [17] and a fixed learning rate schedule (decreasing the learning rate by 4% every 8 epochs). Polyak averaging [13] was used to create the final model used at inference time.
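
The schedule and the averaging step can be sketched in a few lines. Two things here are assumptions rather than details from the text: the base learning rate of 0.01, and the use of a plain uniform average over saved parameter snapshots as the form of Polyak averaging.

```python
def learning_rate(epoch, base_lr=0.01, decay=0.04, step=8):
    """Step schedule: multiply the rate by (1 - decay) every `step` epochs.

    `base_lr` is a hypothetical starting value; the text does not give one.
    """
    return base_lr * (1.0 - decay) ** (epoch // step)


def polyak_average(snapshots):
    """Uniform average of parameter vectors saved along the training run."""
    count = len(snapshots)
    return [sum(values) / count for values in zip(*snapshots)]


for epoch in (0, 7, 8, 16):
    print(epoch, learning_rate(epoch))

# Two hypothetical parameter snapshots, averaged element by element.
print(polyak_average([[1.0, 2.0], [3.0, 4.0]]))
```

The rate stays constant within each block of 8 epochs and shrinks by 4% at each block boundary.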

Image sampling methods changed substantially over the months leading up to the competition, and already converged models were trained further with other options, sometimes in conjunction with changed hyperparameters such as dropout and the learning rate. Therefore, it is hard to give definitive guidance on the single most effective way to train these networks. To complicate matters further, some of the models were mainly trained on smaller relative crops, others on larger ones, inspired by [8]. Still, one prescription that was verified to work very well after the competition includes sampling patches of various sizes, with area distributed evenly between 8% and 100% of the image area and aspect ratio constrained to the interval $[\frac{3}{4}, \frac{4}{3}]$. Also, we found that the photometric distortions of Andrew Howard [8] were useful to combat overfitting to the imaging conditions of the training data.
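
The patch-sampling prescription can be sketched as follows. The text only fixes the area range (8% to 100%) and the aspect-ratio interval; drawing the aspect ratio uniformly, retrying a bounded number of times, and falling back to the full image are all assumptions made for this illustration, and `sample_crop` is a hypothetical name.

```python
import math
import random


def sample_crop(img_w, img_h, rng=random, area_range=(0.08, 1.0),
                ratio_range=(3 / 4, 4 / 3), max_tries=10):
    """Return (x, y, w, h) of a random crop inside an img_w x img_h image.

    The area fraction is drawn uniformly from `area_range`; the aspect
    ratio (width / height) is drawn uniformly from `ratio_range`, which is
    an assumption, since the text only says the ratio is constrained.
    """
    img_area = img_w * img_h
    for _ in range(max_tries):
        target_area = rng.uniform(*area_range) * img_area
        ratio = rng.uniform(*ratio_range)
        w = int(round(math.sqrt(target_area * ratio)))
        h = int(round(math.sqrt(target_area / ratio)))
        if 0 < w <= img_w and 0 < h <= img_h:
            x = rng.randint(0, img_w - w)
            y = rng.randint(0, img_h - h)
            return x, y, w, h
    # Fallback (assumed): use the whole image if no valid crop was found.
    return 0, 0, img_w, img_h


if __name__ == "__main__":
    rng = random.Random(0)
    print(sample_crop(500, 375, rng=rng))
```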

7. ILSVRC 2014 Classification Challenge Setup and Results

The ILSVRC 2014 classification challenge involves the task of classifying an image into one of 1000 leaf-node categories in the ImageNet hierarchy. There are about 1.2 million images for training, 50,000 for validation and 100,000 images for testing. Each image is associated with one ground truth category, and performance is measured based on the highest scoring classifier predictions. Two numbers are usually reported: the top-1 accuracy rate, which compares the ground truth against the first predicted class, and the top-5 error rate, which compares the ground truth against the first 5 predicted classes: an image is deemed correctly classified if the ground truth is among the top-5, regardless of its rank among them. The challenge uses the top-5 error rate for ranking purposes.
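
The two metrics can be made concrete with a short sketch. It assumes each image comes with a list of per-class scores and a single integer ground-truth label; the function name `top_k_error` and the toy scores are illustrative only.

```python
def top_k_error(scores, labels, k):
    """Fraction of examples whose true label is not among the k highest scores."""
    misses = 0
    for row, label in zip(scores, labels):
        # Class indices sorted from highest to lowest score.
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        if label not in ranked[:k]:
            misses += 1
    return misses / len(labels)


if __name__ == "__main__":
    scores = [[0.1, 0.7, 0.2],
              [0.5, 0.3, 0.2],
              [0.2, 0.3, 0.5]]
    labels = [1, 1, 0]
    print("top-1 error:", top_k_error(scores, labels, 1))
    print("top-2 error:", top_k_error(scores, labels, 2))
```

With k=1 this is the complement of the top-1 accuracy rate; with k=5 on the 1000-class task it is the top-5 error rate used for ranking.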

We participated in the challenge with no external data used for training. In addition to the training techniques aforementioned in this paper, we adopted a set of techniques during testing to obtain a higher performance, which we describe next.

- We independently trained 7 versions of the same GoogLeNet model (including one wider version), and performed ensemble prediction with them. These models were trained with the same initialization (even with the same initial weights, due to an oversight) and learning rate policies. They differed only in sampling methodologies and the randomized input image order.

- During testing, we adopted a more aggressive cropping approach than that of Krizhevsky et al. [9]. Specifically, we resized the image to 4 scales where the shorter dimension (height or width) is 256, 288, 320 and 352 respectively, and took the left, center and right squares of these resized images (in the case of portrait images, we took the top, center and bottom squares). For each square, we then took the 4 corners and the center 224×224 crop as well as the square resized to 224×224, and their mirrored versions. This leads to 4×3×6×2 = 144 crops per image. A similar approach was used by Andrew Howard [8] in the previous year's entry, which we empirically verified to perform slightly worse than the proposed scheme. We note that such aggressive cropping may not be necessary in real applications, as the benefit of more crops becomes marginal after a reasonable number of crops are present (as we will show later on).

- The softmax probabilities are averaged over multiple crops and over all the individual classifiers to obtain the final prediction. In our experiments we analyzed alternative approaches on the validation data, such as max pooling over crops and averaging over classifiers, but they led to inferior performance compared with simple averaging.
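
The crop count and the final averaging step can be checked with a small enumeration. This sketch only lists crop descriptors and averages probability vectors; it does not perform any image resizing, and the descriptor names are hypothetical labels for the options described above.

```python
from itertools import product

SCALES = (256, 288, 320, 352)            # length of the shorter image side
SQUARES = ("left", "center", "right")    # top/center/bottom for portrait images
VIEWS = ("top_left", "top_right", "bottom_left", "bottom_right",
         "center", "resized")            # 4 corners, center crop, resized square
MIRRORED = (False, True)


def crop_specs():
    """Enumerate every (scale, square, view, mirrored) combination."""
    return list(product(SCALES, SQUARES, VIEWS, MIRRORED))


def average_probs(prob_vectors):
    """Element-wise mean of a list of equally long probability vectors."""
    n = len(prob_vectors)
    return [sum(column) / n for column in zip(*prob_vectors)]


if __name__ == "__main__":
    print(len(crop_specs()))  # 4 * 3 * 6 * 2 combinations
    print(average_probs([[0.2, 0.8], [0.6, 0.4]]))
```

Because every model sees the same number of crops, averaging over crops and then over models gives the same result as one flat average over all crop-model pairs.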

In the remainder of this paper, we analyze the multiple factors that contribute to the overall performance of the final submission.

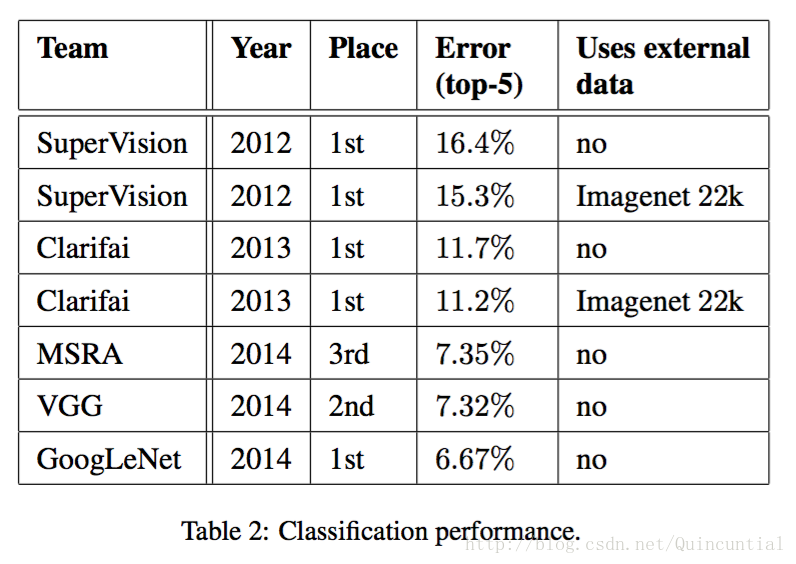

Our final submission to the challenge obtains a top-5 error of 6.67% on both the validation and testing data, ranking first among the participants. This is a 56.5% relative reduction compared to the SuperVision approach in 2012, and about a 40% relative reduction compared to the previous year's best approach (Clarifai), both of which used external data for training the classifiers. Table 2 shows the statistics of some of the top-performing approaches over the past 3 years.

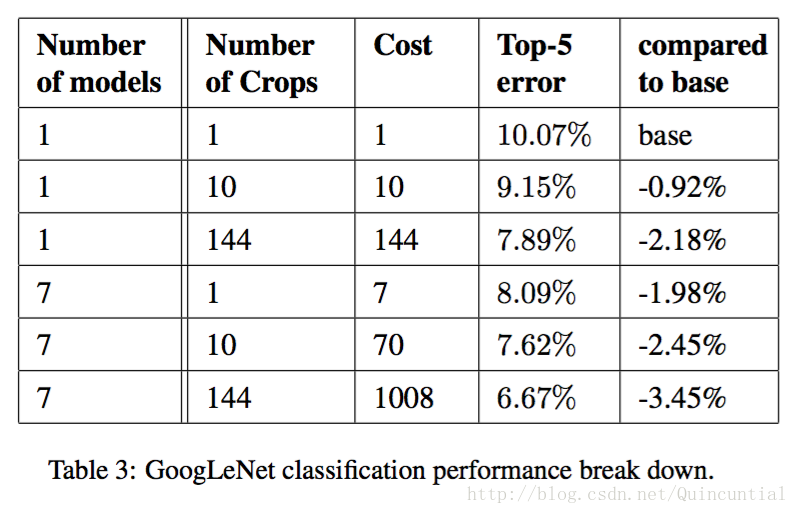

We also analyze and report the performance of multiple testing choices in Table 3, by varying the number of models and the number of crops used when predicting an image. When we use one model, we choose the one with the lowest top-1 error rate on the validation data. All numbers are reported on the validation dataset in order not to overfit to the testing data statistics.

8. ILSVRC 2014 Detection Challenge Setup and Results

The ILSVRC detection task is to produce bounding boxes around objects in images among 200 possible classes. Detected objects count as correct if they match the class of the ground truth and their bounding boxes overlap by at least 50% (using the Jaccard index). Extraneous detections count as false positives and are penalized. Contrary to the classification task, each image may contain many objects or none, and their scale may vary. Results are reported using the mean average precision (mAP). The approach taken by GoogLeNet for detection is similar to the R-CNN of [6], but is augmented with the Inception model as the region classifier. Additionally, the region proposal step is improved by combining the selective search [20] approach with multi-box [5] predictions for higher object bounding box recall. In order to reduce the number of false positives, the superpixel size was increased by 2×. This halves the proposals coming from the selective search algorithm. We added back 200 region proposals coming from multi-box [5], resulting in total in about 60% of the proposals used by [6], while increasing the coverage from 92% to 93%. The overall effect of cutting the number of proposals with increased coverage is a 1% improvement of the mean average precision for the single model case. Finally, we use an ensemble of 6 GoogLeNets when classifying each region. This leads to an increase in accuracy from 40% to 43.9%. Note that contrary to R-CNN, we did not use bounding box regression due to lack of time.
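
The overlap criterion for a correct detection can be sketched as below. Boxes are assumed to be axis-aligned tuples `(x1, y1, x2, y2)` with `x1 < x2` and `y1 < y2`, a representation chosen for this illustration; the function names are hypothetical and the sketch does not cover the full mAP computation or the handling of duplicate detections.

```python
def jaccard(box_a, box_b):
    """Jaccard index (intersection over union) of two (x1, y1, x2, y2) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the intersection rectangle, clamped at zero.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def is_correct_detection(pred_cls, pred_box, gt_cls, gt_box, threshold=0.5):
    """A detection counts if the class matches and the overlap reaches the threshold."""
    return pred_cls == gt_cls and jaccard(pred_box, gt_box) >= threshold


if __name__ == "__main__":
    print(jaccard((0, 0, 10, 10), (5, 0, 15, 10)))
    print(is_correct_detection("dog", (0, 0, 10, 10), "dog", (0, 0, 10, 8)))
```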

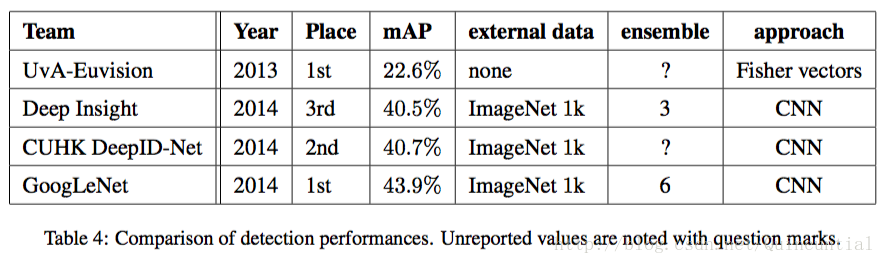

We first report the top detection results and show the progress since the first edition of the detection task. Compared to the 2013 result, the accuracy has almost doubled. The top performing teams all use convolutional networks. We report the official scores in Table 4 and common strategies for each team: the use of external data, ensemble models or contextual models. The external data is typically the ILSVRC12 classification data for pre-training a model that is later refined on the detection data. Some teams also mention the use of the localization data. Since a good portion of the localization task bounding boxes are not included in the detection dataset, one can pre-train a general bounding box regressor with this data the same way classification is used for pre-training. The GoogLeNet entry did not use the localization data for pretraining.

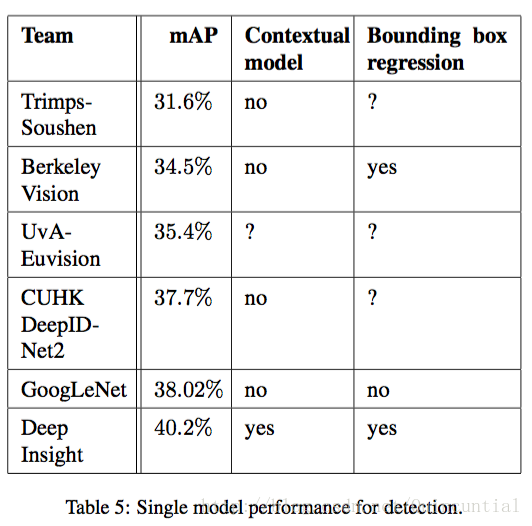

In Table 5, we compare results using a single model only. The top performing model is by Deep Insight and surprisingly only improves by 0.3 points with an ensemble of 3 models while the GoogLeNet obtains significantly stronger results with the ensemble.

9. Conclusions

Our results yield solid evidence that approximating the expected optimal sparse structure by readily available dense building blocks is a viable method for improving neural networks for computer vision. The main advantage of this method is a significant quality gain at a modest increase of computational requirements compared to shallower and narrower architectures.

Our object detection work was competitive despite neither utilizing context nor performing bounding box regression, providing further evidence of the strengths of the Inception architecture.

For both classification and detection, it is expected that similar quality of result can be achieved by much more expensive non-Inception-type networks of similar depth and width. Still, our approach yields solid evidence that moving to sparser architectures is a feasible and useful idea in general. This suggests future work towards creating sparser and more refined structures in automated ways on the basis of [2], as well as on applying the insights of the Inception architecture to other domains.

References

[1] Know your meme: We need to go deeper. http://knowyourmeme.com/memes/we-need-to-go-deeper. Accessed: 2014-09-15.

[2] S. Arora, A. Bhaskara, R. Ge, and T. Ma. Provable bounds for learning some deep representations. CoRR, abs/1310.6343, 2013.

[3] Ü. V. Çatalyürek, C. Aykanat, and B. Uçar. On two-dimensional sparse matrix partitioning: Models, methods, and a recipe. SIAM J. Sci. Comput., 32(2):656–683, Feb. 2010.

[4] J. Dean, G. Corrado, R. Monga, K. Chen, M. Devin, M. Mao, M. Ranzato, A. Senior, P. Tucker, K. Yang, Q. V. Le, and A. Y. Ng. Large scale distributed deep networks. In P. Bartlett, F. Pereira, C. Burges, L. Bottou, and K. Weinberger, editors, NIPS, pages 1232–1240. 2012.

[5] D. Erhan, C. Szegedy, A. Toshev, and D. Anguelov. Scalable object detection using deep neural networks. In CVPR, 2014.

[6] R. B. Girshick, J. Donahue, T. Darrell, and J. Malik. Rich feature hierarchies for accurate object detection and semantic segmentation. In Computer Vision and Pattern Recognition, 2014. CVPR 2014. IEEE Conference on, 2014.

[7] G. E. Hinton, N. Srivastava, A. Krizhevsky, I. Sutskever, and R. Salakhutdinov. Improving neural networks by preventing co-adaptation of feature detectors. CoRR, abs/1207.0580, 2012.

[8] A. G. Howard. Some improvements on deep convolutional neural network based image classification. CoRR, abs/1312.5402, 2013.

[9] A. Krizhevsky, I. Sutskever, and G. Hinton. Imagenet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems 25, pages 1106–1114, 2012.

[10] Y. LeCun, B. Boser, J. S. Denker, D. Henderson, R. E. Howard, W. Hubbard, and L. D. Jackel. Backpropagation applied to handwritten zip code recognition. Neural Comput., 1(4):541–551, Dec. 1989.

[11] Y. LeCun, L. Bottou, Y. Bengio, and P. Haffner. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11):2278–2324, 1998.

[12] M. Lin, Q. Chen, and S. Yan. Network in network. CoRR, abs/1312.4400, 2013.

[13] B. T. Polyak and A. B. Juditsky. Acceleration of stochastic approximation by averaging. SIAM J. Control Optim., 30(4):838–855, July 1992.

[14] P. Sermanet, D. Eigen, X. Zhang, M. Mathieu, R. Fergus, and Y. LeCun. Overfeat: Integrated recognition, localization and detection using convolutional networks. CoRR, abs/1312.6229, 2013.

[15] T. Serre, L. Wolf, S. M. Bileschi, M. Riesenhuber, and T. Poggio. Robust object recognition with cortex-like mechanisms. IEEE Trans. Pattern Anal. Mach. Intell., 29(3):411–426, 2007.

[16] F. Song and J. Dongarra. Scaling up matrix computations on shared-memory manycore systems with 1000 cpu cores. In Proceedings of the 28th ACM International Conference on Supercomputing, ICS '14, pages 333–342, New York, NY, USA, 2014. ACM.

[17] I. Sutskever, J. Martens, G. E. Dahl, and G. E. Hinton. On the importance of initialization and momentum in deep learning. In ICML, volume 28 of JMLR Proceedings, pages 1139–1147. JMLR.org, 2013.

[18] C. Szegedy, A. Toshev, and D. Erhan. Deep neural networks for object detection. In C. J. C. Burges, L. Bottou, Z. Ghahramani, and K. Q. Weinberger, editors, NIPS, pages 2553–2561, 2013.

[19] A. Toshev and C. Szegedy. Deeppose: Human pose estimation via deep neural networks. CoRR, abs/1312.4659, 2013.

[20] K. E. A. van de Sande, J. R. R. Uijlings, T. Gevers, and A. W. M. Smeulders. Segmentation as selective search for object recognition. In Proceedings of the 2011 International Conference on Computer Vision, ICCV '11, pages 1879–1886, Washington, DC, USA, 2011. IEEE Computer Society.

[21] M. D. Zeiler and R. Fergus. Visualizing and understanding convolutional networks. In D. J. Fleet, T. Pajdla, B. Schiele, and T. Tuytelaars, editors, ECCV, volume 8689 of Lecture Notes in Computer Science, pages 818–833. Springer, 2014.