Preface

Hadoop 1.0.2 reportedly ships only the plugin's source code under src/contrib/eclipse-plugin, so here is a matching Eclipse plugin that I built and packaged myself:

Download link

After downloading, drop it into the eclipse/dropins directory. eclipse/plugins works too, but dropins is lighter-weight and recommended. Restart Eclipse, and the Map/Reduce perspective becomes available.

Configuration

Click the small blue elephant icon to create a new Hadoop location:

Note: be sure to fill these fields in correctly. I changed some of the ports, as well as the default user name that jobs run as.

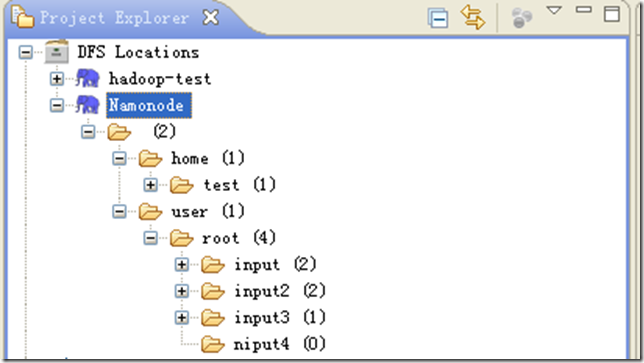

For the detailed settings, see:

If everything is set up correctly, the location then appears in the project area:

From there you can manage the HDFS distributed file system directly: uploading, deleting, and so on.

To prepare for the test below, first create the directory user/root/input2, then upload two txt files into it:

input1.txt contents: Hello Hadoop Goodbye Hadoop

input2.txt contents: Hello World Bye World

With HDFS prepared, we can start testing.

Hadoop Project

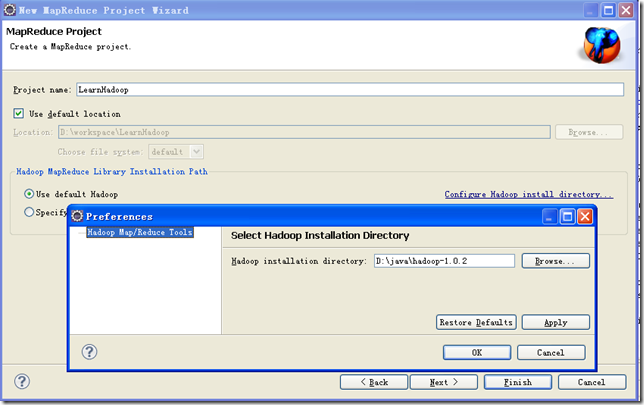

Create a new Map/Reduce Project and point it at your local Hadoop installation directory.

Create a new test class, WordCountTest:


```java
package com.hadoop.learn.test;

import java.io.IOException;
import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;
import org.apache.log4j.Logger;

/**
 * Word count test program
 *
 * @author yongboy
 * @date 2012-04-16
 */
public class WordCountTest {
    private static final Logger log = Logger.getLogger(WordCountTest.class);

    public static class TokenizerMapper extends
            Mapper<Object, Text, Text, IntWritable> {
        private final static IntWritable one = new IntWritable(1);
        private Text word = new Text();

        public void map(Object key, Text value, Context context)
                throws IOException, InterruptedException {
            log.info("Map key : " + key);
            log.info("Map value : " + value);
            // Emit (word, 1) for every whitespace-separated token in the line
            StringTokenizer itr = new StringTokenizer(value.toString());
            while (itr.hasMoreTokens()) {
                String wordStr = itr.nextToken();
                word.set(wordStr);
                log.info("Map word : " + wordStr);
                context.write(word, one);
            }
        }
    }

    public static class IntSumReducer extends
            Reducer<Text, IntWritable, Text, IntWritable> {
        private IntWritable result = new IntWritable();

        public void reduce(Text key, Iterable<IntWritable> values,
                Context context) throws IOException, InterruptedException {
            log.info("Reduce key : " + key);
            log.info("Reduce value : " + values);
            // Sum the counts for this word (this class also serves as the combiner)
            int sum = 0;
            for (IntWritable val : values) {
                sum += val.get();
            }
            result.set(sum);
            log.info("Reduce sum : " + sum);
            context.write(key, result);
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        String[] otherArgs = new GenericOptionsParser(conf, args)
                .getRemainingArgs();
        if (otherArgs.length != 2) {
            System.err.println("Usage: WordCountTest <in> <out>");
            System.exit(2);
        }
        Job job = new Job(conf, "word count");
        job.setJarByClass(WordCountTest.class);
        job.setMapperClass(TokenizerMapper.class);
        job.setCombinerClass(IntSumReducer.class);
        job.setReducerClass(IntSumReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
```

Right-click and choose "Run Configurations". In the window that opens, click the "Arguments" tab and enter the following under "Program arguments":

hdfs://master:9000/user/root/input2 hdfs://master:9000/user/root/output2

Note: these arguments are meant for local debugging, not for a real production environment.
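Both arguments are full HDFS URIs: `master` is the NameNode host, `9000` its port (presumably the same NameNode address entered in the Hadoop location), and the rest is the path inside HDFS. As a minimal JDK-only sketch, `java.net.URI` can split such an argument and catch a wrong scheme before the job is submitted; the class `JobArgsCheck` and its method `isHdfsUri` are hypothetical and not part of Hadoop or the plugin.

```java
import java.net.URI;

/** Hypothetical pre-flight check for WordCountTest's <in> <out> arguments. */
public class JobArgsCheck {

    /** True when the argument is an absolute hdfs:// URI that names a host. */
    static boolean isHdfsUri(String arg) {
        URI uri = URI.create(arg);
        return "hdfs".equals(uri.getScheme()) && uri.getHost() != null;
    }

    public static void main(String[] args) {
        URI in = URI.create("hdfs://master:9000/user/root/input2");
        // NameNode host, NameNode port, and the directory path inside HDFS
        System.out.println(in.getHost() + " " + in.getPort() + " " + in.getPath());
        System.out.println(isHdfsUri("hdfs://master:9000/user/root/output2"));
        System.out.println(isHdfsUri("dfs://master:9000/user/root/output2"));
    }
}
```

Running `main` prints `master 9000 /user/root/input2`, then `true`, then `false` for the `dfs://` variant.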

Then click "Apply" and "Close". Now right-click and choose "Run on Hadoop" to run it.

At this point, however, you may get an exception like the following:

```
12/04/24 15:32:44 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
12/04/24 15:32:44 ERROR security.UserGroupInformation: PriviledgedActionException as:Administrator cause:java.io.IOException: Failed to set permissions of path: \tmp\hadoop-Administrator\mapred\staging\Administrator-519341271\.staging to 0700
Exception in thread "main" java.io.IOException: Failed to set permissions of path: \tmp\hadoop-Administrator\mapred\staging\Administrator-519341271\.staging to 0700
	at org.apache.hadoop.fs.FileUtil.checkReturnValue(FileUtil.java:682)
	at org.apache.hadoop.fs.FileUtil.setPermission(FileUtil.java:655)
	at org.apache.hadoop.fs.RawLocalFileSystem.setPermission(RawLocalFileSystem.java:509)
	at org.apache.hadoop.fs.RawLocalFileSystem.mkdirs(RawLocalFileSystem.java:344)
	at org.apache.hadoop.fs.FilterFileSystem.mkdirs(FilterFileSystem.java:189)
	at org.apache.hadoop.mapreduce.JobSubmissionFiles.getStagingDir(JobSubmissionFiles.java:116)
	at org.apache.hadoop.mapred.JobClient$2.run(JobClient.java:856)
	at org.apache.hadoop.mapred.JobClient$2.run(JobClient.java:850)
	at java.security.AccessController.doPrivileged(Native Method)
	at javax.security.auth.Subject.doAs(Subject.java:396)
	at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1093)
	at org.apache.hadoop.mapred.JobClient.submitJobInternal(JobClient.java:850)
	at org.apache.hadoop.mapreduce.Job.submit(Job.java:500)
	at org.apache.hadoop.mapreduce.Job.waitForCompletion(Job.java:530)
	at com.hadoop.learn.test.WordCountTest.main(WordCountTest.java:85)
```

This is a Windows file-permission problem. On Linux the job runs normally and the problem does not occur.
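To see why this only bites on Windows, the probe below tries to express mode 0700 on a local directory using plain `java.io.File` calls. It rests on an assumption: that Hadoop 1.0.x, when the native library is unavailable (note the NativeCodeLoader warning above), sets local permissions through these same `setReadable`/`setWritable`/`setExecutable` calls and hands each boolean result to `checkReturnValue`. On Windows, the calls that try to remove read or execute permission typically return `false`, which would produce exactly this exception. `PermissionProbe` and the `staging-probe` directory name are hypothetical.

```java
import java.io.File;
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical probe: can java.io.File express mode 0700 on this platform? */
public class PermissionProbe {

    /** Clears each bit for everyone, then grants it back to the owner only (i.e. 0700). */
    static Map<String, Boolean> tryMode0700(File dir) {
        Map<String, Boolean> results = new LinkedHashMap<>();
        results.put("setReadable(false, false)", dir.setReadable(false, false));
        results.put("setReadable(true, true)", dir.setReadable(true, true));
        results.put("setWritable(false, false)", dir.setWritable(false, false));
        results.put("setWritable(true, true)", dir.setWritable(true, true));
        results.put("setExecutable(false, false)", dir.setExecutable(false, false));
        results.put("setExecutable(true, true)", dir.setExecutable(true, true));
        return results;
    }

    public static void main(String[] args) {
        File dir = new File(System.getProperty("java.io.tmpdir"), "staging-probe");
        dir.mkdirs();
        // Each false here corresponds to a place where a strict check would throw
        tryMode0700(dir).forEach((call, ok) ->
                System.out.println(call + " -> " + (ok ? "ok" : "returned false")));
        dir.delete(); // clean up the empty probe directory
    }
}
```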

The fix is to edit checkReturnValue in /hadoop-1.0.2/src/core/org/apache/hadoop/fs/FileUtil.java and comment out its body (a bit crude, but on Windows the check can be skipped):


```java
// ......
  private static void checkReturnValue(boolean rv, File p,
                                       FsPermission permission
                                       ) throws IOException {
    /**
    if (!rv) {
      throw new IOException("Failed to set permissions of path: " + p +
                            " to " +
                            String.format("%04o", permission.toShort()));
    }
    **/
  }
// ......
```

Rebuild hadoop-core-1.0.2.jar and use it to replace the hadoop-core-1.0.2.jar in the hadoop-1.0.2 root directory.

Alternatively, here is a modified build, hadoop-core-1.0.2-modified.jar; simply use it in place of the original hadoop-core-1.0.2.jar.

After replacing the jar, refresh the project, make sure the jar dependencies are set up correctly, and run WordCountTest again.

Once the job succeeds, refresh the HDFS directory in Eclipse and you will see the newly created output2 directory.

Open the part-r-00000 file to see the sorted result:

```
Bye	1
Goodbye	1
Hadoop	2
Hello	2
World	2
```
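The result can be cross-checked without a cluster. This in-memory sketch (class name hypothetical, no Hadoop dependency) follows the same logic as WordCountTest: tokenize each line with `StringTokenizer`, count one per token, and sum per word. A `TreeMap` stands in for the sort that happens before the reduce phase, so for ASCII words like these the keys come out in the same order as in part-r-00000.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

/** In-memory sketch of WordCountTest's map -> sort -> reduce flow. */
public class LocalWordCount {

    static Map<String, Integer> count(List<String> lines) {
        // TreeMap keeps keys sorted, standing in for the shuffle/sort phase
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            // Map side: split on whitespace, one count per token
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                // Reduce side: merge() sums the counts for each word
                counts.merge(itr.nextToken(), 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> input = Arrays.asList(
                "Hello Hadoop Goodbye Hadoop",  // input1.txt
                "Hello World Bye World");       // input2.txt
        count(input).forEach((w, n) -> System.out.println(w + "\t" + n));
    }
}
```

Running `main` prints the same five tab-separated lines as part-r-00000 above.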

Debugging works as usual too: set breakpoints and choose right-click -> Debug As -> Java Application. Note that you have to delete the output directory manually before every run.

In addition, the plugin automatically generates jar files and other files, including some Hadoop-specific configuration, under workspace\.metadata\.plugins\org.apache.hadoop.eclipse in the corresponding Eclipse workspace.

There are more details to discover; explore them at your own pace.

Exceptions encountered

```
org.apache.hadoop.ipc.RemoteException: org.apache.hadoop.hdfs.server.namenode.SafeModeException: Cannot create directory /user/root/output2/_temporary. Name node is in safe mode.
The ratio of reported blocks 0.5000 has not reached the threshold 0.9990. Safe mode will be turned off automatically.
	at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirsInternal(FSNamesystem.java:2055)
	at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirs(FSNamesystem.java:2029)
	at org.apache.hadoop.hdfs.server.namenode.NameNode.mkdirs(NameNode.java:817)
	at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
	at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:39)
	at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:25)
	at java.lang.reflect.Method.invoke(Method.java:597)
	at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:563)
	at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:1388)
	at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:1384)
	at java.security.AccessController.doPrivileged(Native Method)
	at javax.security.auth.Subject.doAs(Subject.java:396)
	at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1093)
	at org.apache.hadoop.ipc.Server$Handler.run(Server.java:1382)
```

On the master node, turn off safe mode:

# bin/hadoop dfsadmin -safemode leave
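The exception above reports a block ratio of 0.5000 against a threshold of 0.9990, which is why the NameNode refused to create the output directory. A simplified sketch of that comparison follows. It assumes only the block ratio matters; the real NameNode also applies an extension period (`dfs.safemode.extension`) and other conditions. The 0.999 default corresponds to `dfs.safemode.threshold.pct` in Hadoop 1.x, and the 1-of-2 block counts in `main` are an illustrative assumption, not taken from the log.

```java
/** Simplified, hypothetical model of the NameNode's safe-mode block-ratio check. */
public class SafeModeRatio {

    // Assumed default of dfs.safemode.threshold.pct in Hadoop 1.x
    static final double DEFAULT_THRESHOLD = 0.999;

    /** True while the fraction of reported blocks is still below the threshold. */
    static boolean staysInSafeMode(long reportedBlocks, long totalBlocks, double threshold) {
        if (totalBlocks == 0) {
            return false; // nothing to wait for, treat the ratio as 1.0
        }
        double ratio = (double) reportedBlocks / totalBlocks;
        return ratio < threshold;
    }

    public static void main(String[] args) {
        // e.g. only 1 of 2 blocks reported gives the 0.5000 ratio from the log
        System.out.println(staysInSafeMode(1, 2, DEFAULT_THRESHOLD));
        System.out.println(staysInSafeMode(2, 2, DEFAULT_THRESHOLD));
    }
}
```

Running `main` prints `true` and then `false`: with every block reported, the ratio reaches 1.0 and the block condition for leaving safe mode is met.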

Packaging

Packaging the Map/Reduce project into a jar is simple and needs little explanation. Just make sure the jar's META-INF/MANIFEST.MF file contains a Main-Class entry:

Main-Class: com.hadoop.learn.test.TestDriver

If you use third-party jars, add a Class-Path entry to MANIFEST.MF as well.
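Both entries can be produced with the JDK's `java.util.jar.Manifest` API. A minimal sketch, where `ManifestSketch` and the `lib/third-party.jar` dependency are hypothetical; Class-Path takes space-separated paths relative to the jar's own location, and the Manifest API requires Manifest-Version to be set before writing.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.util.jar.Attributes;
import java.util.jar.Manifest;

/** Builds MANIFEST.MF main attributes with Main-Class and an optional Class-Path. */
public class ManifestSketch {

    static Manifest build(String mainClass, String classPath) {
        Manifest mf = new Manifest();
        Attributes main = mf.getMainAttributes();
        // Required before Manifest.write(), otherwise the main section is not written
        main.put(Attributes.Name.MANIFEST_VERSION, "1.0");
        main.put(Attributes.Name.MAIN_CLASS, mainClass);
        if (classPath != null) {
            main.put(Attributes.Name.CLASS_PATH, classPath);
        }
        return mf;
    }

    public static void main(String[] args) throws IOException {
        Manifest mf = build("com.hadoop.learn.test.TestDriver", "lib/third-party.jar");
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        mf.write(out);
        System.out.print(out.toString("UTF-8"));
        // Parse it back and read the entry point, as a jar launcher would
        Manifest parsed = new Manifest(new ByteArrayInputStream(out.toByteArray()));
        System.out.println(parsed.getMainAttributes().getValue("Main-Class"));
    }
}
```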

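You can also use the MapReduce Driver wizard provided by the plugin. It lets you refer to a job by an alias when running it on Hadoop, which is especially handy when the jar contains several Map/Reduce jobs.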

A MapReduce Driver only needs a main method that registers the aliases:


```java
package com.hadoop.learn.test;

import org.apache.hadoop.util.ProgramDriver;

/**
 *
 * @author yongboy
 * @time 2012-4-24
 * @version 1.0
 */
public class TestDriver {

    public static void main(String[] args) {
        int exitCode = -1;
        ProgramDriver pgd = new ProgramDriver();
        try {
            // Register WordCountTest under the alias "testcount"
            pgd.addClass("testcount", WordCountTest.class,
                    "A test map/reduce program that counts the words in the input files.");
            pgd.driver(args);
            exitCode = 0;
        } catch (Throwable e) {
            e.printStackTrace();
        }
        System.exit(exitCode);
    }
}
```

A small trick: right-click the MapReduce Driver class and choose Run on Hadoop. The plugin automatically generates a jar under workspace\.metadata\.plugins\org.apache.hadoop.eclipse in the Eclipse workspace. Upload that jar to HDFS or to the root directory of the remote Hadoop installation, and run it:

# bin/hadoop jar LearnHadoop_TestDriver.java-460881982912511899.jar testcount input2 output3
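In this command, `testcount` is the alias registered in TestDriver, and the remaining arguments (`input2 output3`) are passed on to WordCountTest's main method. Below is a plain-Java sketch of that alias-to-main dispatch, written in the spirit of `ProgramDriver` but not its actual source; `AliasDispatcher` and `EchoJob` are hypothetical, and `EchoJob` stands in for WordCountTest so the sketch runs without Hadoop.

```java
import java.lang.reflect.InvocationTargetException;
import java.lang.reflect.Method;
import java.util.Arrays;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical alias dispatcher modelled loosely on ProgramDriver's behaviour. */
public class AliasDispatcher {

    private final Map<String, Class<?>> programs = new TreeMap<>();

    /** Registers a class that has a static main(String[]) under an alias. */
    void addClass(String alias, Class<?> mainClass) {
        programs.put(alias, mainClass);
    }

    /** Treats args[0] as the alias and calls that class's main with the remaining args. */
    int run(String[] args) {
        if (args.length == 0 || !programs.containsKey(args[0])) {
            System.err.println("Valid program names are: " + programs.keySet());
            return -1;
        }
        String[] rest = Arrays.copyOfRange(args, 1, args.length);
        try {
            Method main = programs.get(args[0]).getMethod("main", String[].class);
            main.invoke(null, (Object) rest); // static method, so no receiver
            return 0;
        } catch (InvocationTargetException e) {
            throw new IllegalStateException("program failed: " + args[0], e.getCause());
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("cannot call main of " + args[0], e);
        }
    }

    /** Stand-in for WordCountTest so the sketch has no Hadoop dependency. */
    public static class EchoJob {
        public static void main(String[] args) {
            System.out.println("EchoJob got " + Arrays.toString(args));
        }
    }

    public static void main(String[] args) {
        AliasDispatcher driver = new AliasDispatcher();
        driver.addClass("testcount", EchoJob.class);
        // Mirrors: bin/hadoop jar <jar> testcount input2 output3
        driver.run(new String[] {"testcount", "input2", "output3"});
    }
}
```

Running `main` prints `EchoJob got [input2, output3]`; an unknown alias prints the registered names and returns -1.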

OK, that's all for this post.