1. Compile the Eclipse plugin for hadoop 2.6.0

Download the source:

git clone https://github.com/winghc/hadoop2x-eclipse-plugin.git

Compile the source:

cd hadoop2x-eclipse-plugin/src/contrib/eclipse-plugin

ant jar -Dversion=2.6.0 -Declipse.home=/opt/eclipse -Dhadoop.home=/opt/hadoop-2.6.0

Set eclipse.home and hadoop.home to the paths in your own environment.

Run the build from the command line. It produces 8 warnings, which can simply be ignored.
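As a rough illustration of how the three -D properties fit together, the sketch below assembles the same ant invocation from its parts. The class and method names (PluginBuildCommand, antCommand) are made up for this example, and the paths are the ones used above; substitute your own.

```java
import java.util.Arrays;
import java.util.List;

public class PluginBuildCommand {
    // Assemble the ant invocation from the three values the build expects.
    static List<String> antCommand(String version, String eclipseHome, String hadoopHome) {
        return Arrays.asList(
                "ant", "jar",
                "-Dversion=" + version,
                "-Declipse.home=" + eclipseHome,
                "-Dhadoop.home=" + hadoopHome);
    }

    public static void main(String[] args) {
        // Paths copied from the command above; adjust them to your machine.
        List<String> cmd = antCommand("2.6.0", "/opt/eclipse", "/opt/hadoop-2.6.0");
        System.out.println(String.join(" ", cmd));
    }
}
```

The list could be handed to java.lang.ProcessBuilder to run the build from a script; here it is only printed.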

compile:

[echo] contrib: eclipse-plugin

[javac] /software/hadoop2x-eclipse-plugin/src/contrib/eclipse-plugin/build.xml:76: warning: 'includeantruntime' was not set, defaulting to build.sysclasspath=last; set to false for repeatable builds

[javac] Compiling 45 source files to /software/hadoop2x-eclipse-plugin/build/contrib/eclipse-plugin/classes

[javac] /opt/hadoop-2.6.0/share/hadoop/common/hadoop-common-2.6.0.jar(org/apache/hadoop/fs/Path.class): warning: Cannot find annotation method 'value()' in type 'LimitedPrivate': class file for org.apache.hadoop.classification.InterfaceAudience not found

[javac] /opt/hadoop-2.6.0/share/hadoop/hdfs/hadoop-hdfs-2.6.0.jar(org/apache/hadoop/hdfs/DistributedFileSystem.class): warning: Cannot find annotation method 'value()' in type 'LimitedPrivate'

[javac] /opt/hadoop-2.6.0/share/hadoop/common/hadoop-common-2.6.0.jar(org/apache/hadoop/fs/FileSystem.class): warning: Cannot find annotation method 'value()' in type 'LimitedPrivate'

[javac] /opt/hadoop-2.6.0/share/hadoop/common/hadoop-common-2.6.0.jar(org/apache/hadoop/fs/FileSystem.class): warning: Cannot find annotation method 'value()' in type 'LimitedPrivate'

[javac] /opt/hadoop-2.6.0/share/hadoop/common/hadoop-common-2.6.0.jar(org/apache/hadoop/fs/FileSystem.class): warning: Cannot find annotation method 'value()' in type 'LimitedPrivate'

[javac] /opt/hadoop-2.6.0/share/hadoop/common/hadoop-common-2.6.0.jar(org/apache/hadoop/fs/FileSystem.class): warning: Cannot find annotation method 'value()' in type 'LimitedPrivate'

[javac] /opt/hadoop-2.6.0/share/hadoop/common/hadoop-common-2.6.0.jar(org/apache/hadoop/fs/FSDataInputStream.class): warning: Cannot find annotation method 'value()' in type 'LimitedPrivate'

[javac] /opt/hadoop-2.6.0/share/hadoop/common/hadoop-common-2.6.0.jar(org/apache/hadoop/fs/FSDataOutputStream.class): warning: Cannot find annotation method 'value()' in type 'LimitedPrivate'

[javac] Note: Some input files use or override a deprecated API.

[javac] Note: Recompile with -Xlint:deprecation for details.

[javac] Note: Some input files use unchecked or unsafe operations.

[javac] Note: Recompile with -Xlint:unchecked for details.

[javac] 8 warnings

Output location:

[jar] Building jar: /software/hadoop2x-eclipse-plugin/build/contrib/eclipse-plugin/hadoop-eclipse-plugin-2.6.0.jar

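2. Install the plugin

After logging in to the desktop, the user who starts Eclipse should preferably be the hadoop administrator, that is, the user who installed hadoop. Otherwise read/write requests are rejected with permission errors.

Copy the compiled jar into the Eclipse plugins directory, then restart Eclipse.

Configure the hadoop installation directory

Window -> Preferences -> Hadoop Map/Reduce -> Hadoop installation directory

Configure the Map/Reduce view

Window -> Open Perspective -> Other -> Map/Reduce -> click "OK"

Window -> Show View -> Other -> Map/Reduce Locations -> click "OK"

A new "Map/Reduce Locations" tab appears in the console area.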
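The copy step can also be scripted. This is a minimal sketch, assuming the standard Eclipse layout in which plugins live in a `plugins` subdirectory of the Eclipse home; the class name InstallPlugin is invented for the example, and the two paths are the ones from the build above.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.nio.file.StandardCopyOption;

public class InstallPlugin {
    // Copy the built jar into <eclipseHome>/plugins, replacing any older copy.
    static Path install(Path jar, Path eclipseHome) throws IOException {
        Path plugins = eclipseHome.resolve("plugins"); // assumed Eclipse layout
        Files.createDirectories(plugins);
        Path target = plugins.resolve(jar.getFileName());
        return Files.copy(jar, target, StandardCopyOption.REPLACE_EXISTING);
    }

    public static void main(String[] args) throws IOException {
        Path jar = Paths.get("/software/hadoop2x-eclipse-plugin/build/contrib/"
                + "eclipse-plugin/hadoop-eclipse-plugin-2.6.0.jar");
        System.out.println("Installed to " + install(jar, Paths.get("/opt/eclipse")));
    }
}
```

Eclipse still has to be restarted afterwards for the plugin to load.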

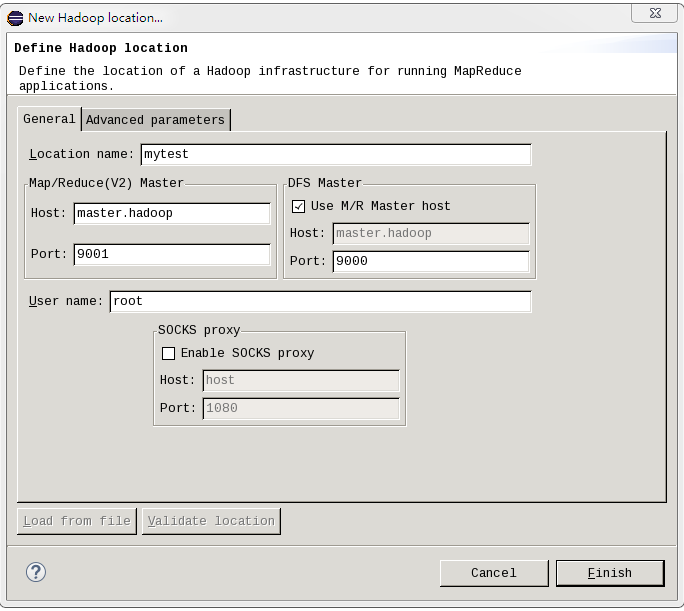

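On the "Map/Reduce Locations" tab, click the elephant-with-plus icon, or right-click in the empty area and choose "New Hadoop location…". The "New hadoop location…" dialog opens; fill it in as follows:

Note: the MR Master and DFS Master settings must match the values in mapred-site.xml, core-site.xml, and the other configuration files.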

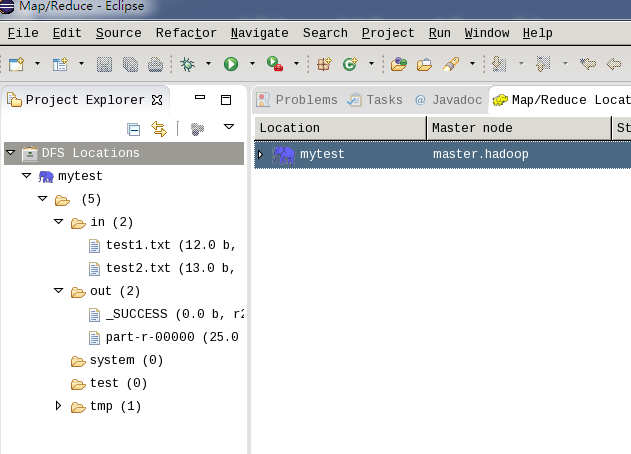
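To find the value the DFS Master fields have to match, one option is to read it straight out of core-site.xml. The sketch below parses a configuration string with the JDK's DOM parser and splits fs.defaultFS into host and port. The XML content, host, and port shown are placeholders rather than values from this setup, and the class name DfsMasterLookup is invented for the example.

```java
import java.io.ByteArrayInputStream;
import java.net.URI;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

public class DfsMasterLookup {
    // Return the <value> of the named <property>, or null if it is absent.
    static String property(String xml, String name) throws Exception {
        Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
        NodeList props = doc.getElementsByTagName("property");
        for (int i = 0; i < props.getLength(); i++) {
            Element p = (Element) props.item(i);
            String n = p.getElementsByTagName("name").item(0).getTextContent().trim();
            if (n.equals(name)) {
                return p.getElementsByTagName("value").item(0).getTextContent().trim();
            }
        }
        return null;
    }

    public static void main(String[] args) throws Exception {
        // Hypothetical core-site.xml content; host and port are placeholders.
        String coreSite = "<configuration><property>"
                + "<name>fs.defaultFS</name><value>hdfs://localhost:9000</value>"
                + "</property></configuration>";
        URI fs = URI.create(property(coreSite, "fs.defaultFS"));
        System.out.println("DFS Master host=" + fs.getHost() + " port=" + fs.getPort());
    }
}
```

The sketch assumes every property element has both a name and a value child, which holds for well-formed Hadoop configuration files.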

Open Project Explorer to browse the HDFS file system.

3. Create a new Map/Reduce job

File -> New -> Project -> Map/Reduce Project -> Next

Write the WordCount class:

    package mytest;

    import java.io.IOException;
    import java.util.*;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.conf.*;
    import org.apache.hadoop.io.*;
    import org.apache.hadoop.mapred.*;
    import org.apache.hadoop.util.*;

    public class WordCount {

        // Mapper: emit (word, 1) for every whitespace-separated token in a line.
        public static class Map extends MapReduceBase implements
                Mapper<LongWritable, Text, Text, IntWritable> {
            private final static IntWritable one = new IntWritable(1);
            private Text word = new Text();

            public void map(LongWritable key, Text value,
                    OutputCollector<Text, IntWritable> output, Reporter reporter)
                    throws IOException {
                String line = value.toString();
                StringTokenizer tokenizer = new StringTokenizer(line);
                while (tokenizer.hasMoreTokens()) {
                    word.set(tokenizer.nextToken());
                    output.collect(word, one);
                }
            }
        }

        // Reducer: sum the counts collected for each word.
        public static class Reduce extends MapReduceBase implements
                Reducer<Text, IntWritable, Text, IntWritable> {
            public void reduce(Text key, Iterator<IntWritable> values,
                    OutputCollector<Text, IntWritable> output, Reporter reporter)
                    throws IOException {
                int sum = 0;
                while (values.hasNext()) {
                    sum += values.next().get();
                }
                output.collect(key, new IntWritable(sum));
            }
        }

        // Driver: args[0] is the input path, args[1] is the output path.
        public static void main(String[] args) throws Exception {
            JobConf conf = new JobConf(WordCount.class);
            conf.setJobName("wordcount");

            conf.setOutputKeyClass(Text.class);
            conf.setOutputValueClass(IntWritable.class);

            conf.setMapperClass(Map.class);
            conf.setReducerClass(Reduce.class);

            conf.setInputFormat(TextInputFormat.class);
            conf.setOutputFormat(TextOutputFormat.class);

            FileInputFormat.setInputPaths(conf, new Path(args[0]));
            FileOutputFormat.setOutputPath(conf, new Path(args[1]));

            JobClient.runJob(conf);
        }
    }
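To see what the Map and Reduce classes above compute without a cluster, the following plain-Java sketch applies the same tokenize-then-sum logic in memory. It is an illustration only, not part of the job: LocalWordCount is a name invented for this example, and it uses no Hadoop classes.

```java
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

public class LocalWordCount {
    // Same logic as Map + Reduce, collapsed into one in-memory pass.
    static Map<String, Integer> count(String... lines) {
        Map<String, Integer> sums = new TreeMap<>();
        for (String line : lines) {
            StringTokenizer tokenizer = new StringTokenizer(line);
            while (tokenizer.hasMoreTokens()) {
                // The mapper would emit (word, 1); the reducer adds the ones per word.
                sums.merge(tokenizer.nextToken(), 1, Integer::sum);
            }
        }
        return sums;
    }

    public static void main(String[] args) {
        System.out.println(count("hello hadoop", "hello eclipse"));
        // prints {eclipse=1, hadoop=1, hello=2}
    }
}
```

On a real cluster the per-word grouping is done by the shuffle phase between map and reduce; the TreeMap stands in for that step here.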

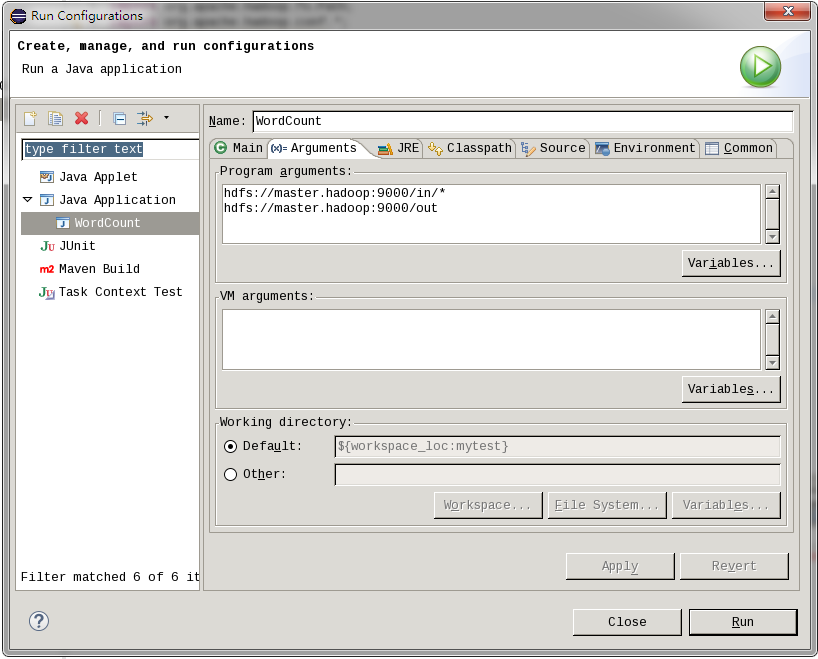

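Configure the run arguments: right-click --> Run As --> Run Configurations

in is a folder on HDFS (create it yourself) that holds the files to process; out holds the output.

To run the program on the hadoop cluster: right-click --> Run As --> Run on Hadoop. The final output appears in the corresponding folder on HDFS. With that, the hadoop-2.6.0 Eclipse plugin setup on Linux is complete.

Problems encountered during configuration:

Eclipse cannot write to the HDFS file system, which directly prevents programs written in Eclipse from running on hadoop.

Open hdfs-site.xml in the Hadoop configuration directory (conf/ in older releases, etc/hadoop/ in hadoop-2.6.0), find the dfs.permissions property, and change it to false (the default is true). In Hadoop 2.x the property is named dfs.permissions.enabled; dfs.permissions is still accepted as a deprecated alias.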
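WordCount.main reads args[0] and args[1] directly, so a missing argument in the run configuration surfaces as an ArrayIndexOutOfBoundsException. A small guard like the one below gives a clearer message. This is a sketch; WordCountArgs and parse are hypothetical names, not part of the original class.

```java
public class WordCountArgs {
    // Return {input, output}, or fail with a usage message instead of an
    // ArrayIndexOutOfBoundsException from args[0] / args[1].
    static String[] parse(String[] args) {
        if (args.length != 2) {
            throw new IllegalArgumentException("usage: WordCount <in> <out>");
        }
        if (args[0].equals(args[1])) {
            throw new IllegalArgumentException("input and output paths must differ");
        }
        return new String[] {args[0], args[1]};
    }

    public static void main(String[] args) {
        String[] paths = parse(new String[] {"in", "out"});
        System.out.println("input=" + paths[0] + " output=" + paths[1]);
    }
}
```

Hadoop also refuses to start a job whose output directory already exists, so only the in folder should be created in advance.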

    <property>
        <name>dfs.permissions</name>
        <value>false</value>
    </property>

HDFS must be restarted after this change.

The simplest fix is the one mentioned earlier: log in to the desktop and start Eclipse as the hadoop administrator user.
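A quick way to confirm which account Eclipse, or any JVM it launches, is running under is to compare the user.name system property with the hadoop administrator account. The sketch below assumes that account is called "hadoop", which is only a placeholder; LaunchUserCheck is likewise a name invented for the example.

```java
public class LaunchUserCheck {
    // True when the OS account running this JVM matches the expected hadoop admin.
    static boolean isHadoopAdmin(String currentUser, String hadoopAdmin) {
        return currentUser != null && currentUser.equals(hadoopAdmin);
    }

    public static void main(String[] args) {
        String current = System.getProperty("user.name");
        String admin = "hadoop"; // hypothetical admin account name; use your own
        if (isHadoopAdmin(current, admin)) {
            System.out.println("Running as the hadoop administrator: " + current);
        } else {
            System.out.println("Running as " + current + ", expected " + admin
                    + "; HDFS writes may be rejected");
        }
    }
}
```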